apt update && apt -y install bind9-utils expect rsync jq psmisc net-tools lvm2 vim unzip rename tree

[root@k8s-cluster241 ~]# cat >> /etc/hosts <<'EOF'

10.0.0.240 apiserver-lb

10.0.0.241 k8s-cluster241

10.0.0.242 k8s-cluster242

10.0.0.243 k8s-cluster243

EOF

[root@k8s-cluster241 ~]# cat > password_free_login.sh <<'EOF'

#!/bin/bash

# Create the key pair

ssh-keygen -t rsa -P "" -f /root/.ssh/id_rsa -q

# Set the server password. Ideally all nodes share the same password; otherwise this script needs further changes

export mypasswd=1

# Define the host list

k8s_host_list=(k8s-cluster241 k8s-cluster242 k8s-cluster243)

# Set up passwordless login; expect answers the prompts non-interactively

for i in ${k8s_host_list[@]};do

expect -c "

spawn ssh-copy-id -i /root/.ssh/id_rsa.pub root@$i

expect {

\"*yes/no*\" {send \"yes\r\"; exp_continue}

\"*password*\" {send \"$mypasswd\r\"; exp_continue}

}"

done

EOF

bash password_free_login.sh
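
Once the script has run, it can be worth confirming that key-based login really works before relying on it for rsync. The following is a minimal sketch, not part of the original procedure: `check_passwordless` is a hypothetical helper name, and it assumes the standard OpenSSH client options `BatchMode` and `ConnectTimeout`. With `BatchMode=yes`, ssh fails instead of prompting for a password.

```shell
# Sketch: verify passwordless SSH to each host given as an argument.
# check_passwordless is a hypothetical helper, not part of the original script.
check_passwordless() {
  failed=0
  for host in "$@"; do
    # BatchMode=yes disables password prompts, so a missing key fails fast.
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "root@${host}" true; then
      echo "${host}: ok"
    else
      echo "${host}: FAILED"
      failed=1
    fi
  done
  # Non-zero exit status if any host could not be reached without a password.
  return "$failed"
}
```

A possible call would be `check_passwordless k8s-cluster241 k8s-cluster242 k8s-cluster243`.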

[root@k8s-cluster241 ~]# cat > /usr/local/sbin/data_rsync.sh <<'EOF'

#!/bin/bash

if [ $# -lt 1 ];then

echo "Usage: $0 /path/to/file (absolute path) [mode: m|w]"

exit

fi

if [ ! -e $1 ];then

echo "[ $1 ] dir or file not found!"

exit

fi

fullpath=`dirname $1`

basename=`basename $1`

cd $fullpath

case $2 in

WORKER_NODE|w)

K8S_NODE=(k8s-cluster242 k8s-cluster243)

;;

MASTER_NODE|m)

K8S_NODE=(k8s-cluster242 k8s-cluster243)

;;

*)

K8S_NODE=(k8s-cluster242 k8s-cluster243)

;;

esac

for host in ${K8S_NODE[@]};do

tput setaf 2

echo ===== rsyncing ${host}: $basename =====

tput setaf 7

rsync -az $basename `whoami`@${host}:$fullpath

if [ $? -eq 0 ];then

echo "Command succeeded!"

fi

done

EOF

chmod +x /usr/local/sbin/data_rsync.sh

data_rsync.sh /etc/hosts

systemctl disable --now NetworkManager ufw

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab

ln -svf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

cat >> /etc/security/limits.conf <<'EOF'

* soft nofile 655360

* hard nofile 655360

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

sed -i 's@#UseDNS yes@UseDNS no@g' /etc/ssh/sshd_config

sed -i 's@^GSSAPIAuthentication yes@GSSAPIAuthentication no@g' /etc/ssh/sshd_config

cat > /etc/sysctl.d/k8s.conf <<'EOF'

# The following 3 parameters are kernel parameters that containerd depends on

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv6.conf.all.disable_ipv6 = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system

cat <<EOF >> ~/.bashrc

PS1='[\[\e[34;1m\]\u@\[\e[0m\]\[\e[32;1m\]\H\[\e[0m\]\[\e[31;1m\] \W\[\e[0m\]]# '

EOF

source ~/.bashrc

free -h

apt -y install ipvsadm ipset sysstat conntrack

cat > /etc/modules-load.d/ipvs.conf << 'EOF'

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

br_netfilter

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

reboot

lsmod | grep --color=auto -e ip_vs -e nf_conntrack -e br_netfilter

uname -r

ifconfig

free -h

./install-docker.sh r

ip link del docker0

wget http://192.168.16.32/Resources/Docker/scripts/autoinstall-containerd-v1.6.36.zip

unzip autoinstall-containerd-v1.6.36.zip

bash install-containerd.sh i

[root@k8s-cluster241 ~]# ctr version

Client:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

Go version: go1.22.7

Server:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

UUID: 16ee6ed0-0714-43c1-b467-a9d6b50993f4

[root@k8s-cluster242 ~]# ctr version

Client:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

Go version: go1.22.7

Server:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

UUID: 9ea686b1-a131-4bd1-8950-fccad5871961

[root@k8s-cluster243 ~]# ctr version

Client:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

Go version: go1.22.7

Server:

Version: v1.6.36

Revision: 88c3d9bc5b5a193f40b7c14fa996d23532d6f956

UUID: 9fa162b1-43d8-4108-a7fc-c3cc708b9606

wget https://github.com/etcd-io/etcd/releases/download/v3.5.22/etcd-v3.5.22-linux-amd64.tar.gz

or

wget http://192.168.16.32/Resources/Prometheus/softwares/etcd/etcd-v3.5.22-linux-amd64.tar.gz

[root@k8s-cluster241 ~]# tar -xf etcd-v3.5.22-linux-amd64.tar.gz -C /usr/local/bin etcd-v3.5.22-linux-amd64/etcd{,ctl} --strip-components=1

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# ll /usr/local/bin/etcd*

-rwxr-xr-x 1 root root 24072344 Mar 28 06:58 /usr/local/bin/etcd*

-rwxr-xr-x 1 root root 18419864 Mar 28 06:58 /usr/local/bin/etcdctl*

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# etcdctl version

etcdctl version: 3.5.22

API version: 3.5

[root@k8s-cluster241 ~]#

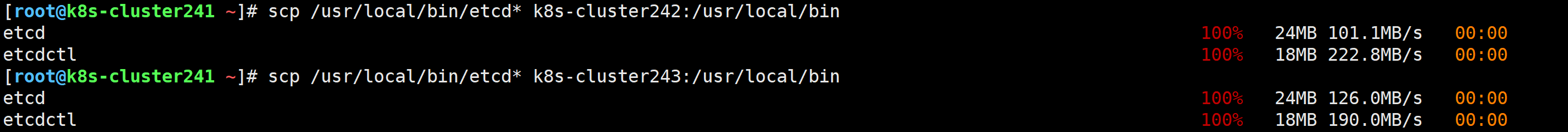

scp /usr/local/bin/etcd* k8s-cluster242:/usr/local/bin

scp /usr/local/bin/etcd* k8s-cluster243:/usr/local/bin

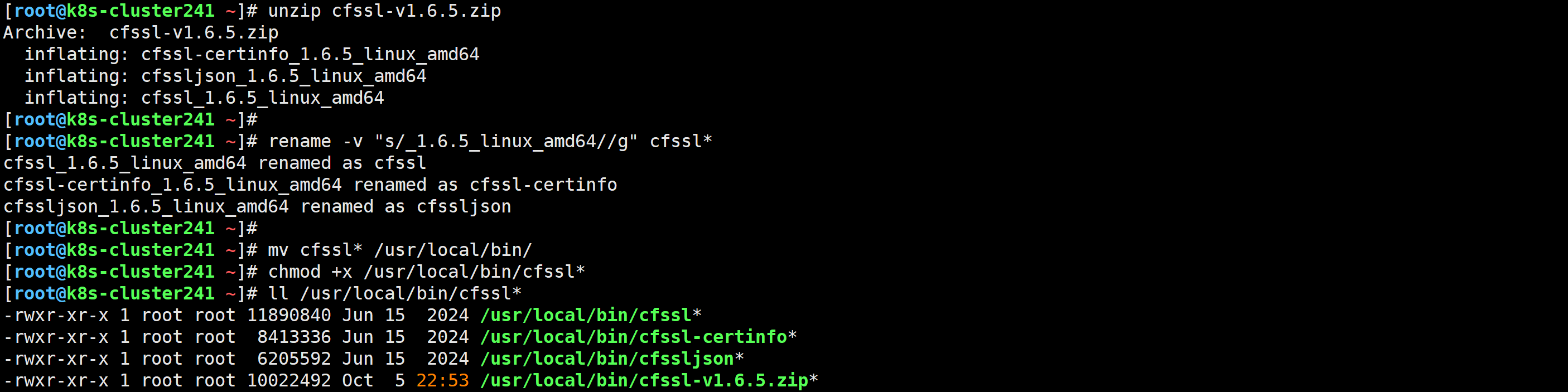

[root@k8s-cluster241 ~]# wget http://192.168.16.32/Resources/Prometheus/softwares/etcd/cfssl-v1.6.5.zip

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# unzip cfssl-v1.6.5.zip

Archive: cfssl-v1.6.5.zip

inflating: cfssl-certinfo_1.6.5_linux_amd64

inflating: cfssljson_1.6.5_linux_amd64

inflating: cfssl_1.6.5_linux_amd64

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# rename -v "s/_1.6.5_linux_amd64//g" cfssl*

cfssl_1.6.5_linux_amd64 renamed as cfssl

cfssl-certinfo_1.6.5_linux_amd64 renamed as cfssl-certinfo

cfssljson_1.6.5_linux_amd64 renamed as cfssljson

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# mv cfssl* /usr/local/bin/

[root@k8s-cluster241 ~]# chmod +x /usr/local/bin/cfssl*

[root@k8s-cluster241 ~]# ll /usr/local/bin/cfssl*

-rwxr-xr-x 1 root root 11890840 Jun 15 2024 /usr/local/bin/cfssl*

-rwxr-xr-x 1 root root 8413336 Jun 15 2024 /usr/local/bin/cfssl-certinfo*

-rwxr-xr-x 1 root root 6205592 Jun 15 2024 /usr/local/bin/cfssljson*

-rwxr-xr-x 1 root root 10022492 Oct 5 22:53 /usr/local/bin/cfssl-v1.6.5.zip*

[root@k8s-cluster241 ~]#

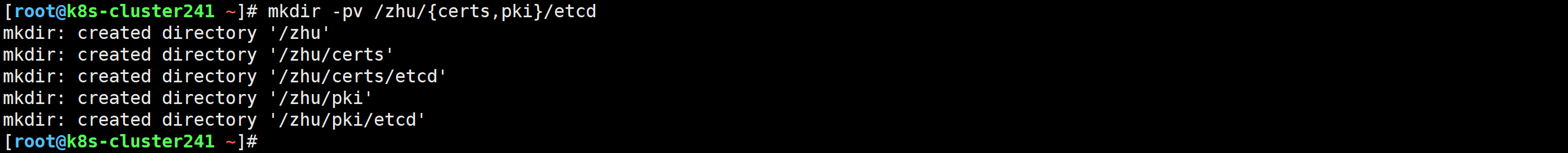

[root@k8s-cluster241 ~]# mkdir -pv /zhu/{certs,pki}/etcd

mkdir: created directory '/zhu'

mkdir: created directory '/zhu/certs'

mkdir: created directory '/zhu/certs/etcd'

mkdir: created directory '/zhu/pki'

mkdir: created directory '/zhu/pki/etcd'

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# cd /zhu/pki/etcd

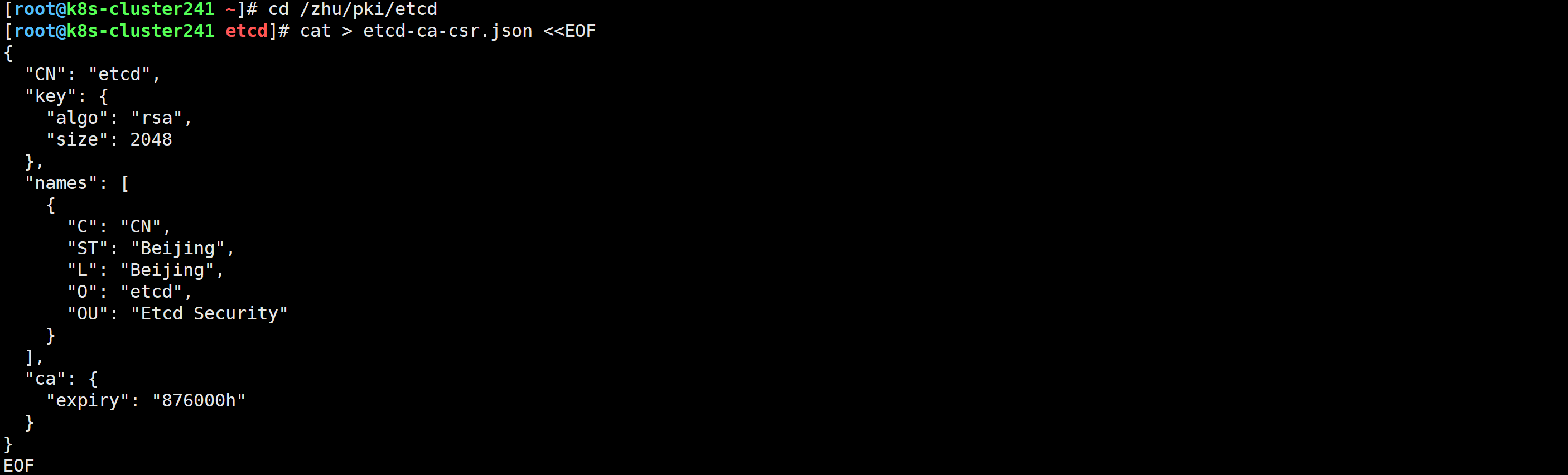

[root@k8s-cluster241 etcd]# cat > etcd-ca-csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "Etcd Security"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

[root@k8s-cluster241 etcd]# cfssl gencert -initca etcd-ca-csr.json | cfssljson -bare /zhu/certs/etcd/etcd-ca

2025/10/08 14:36:29 [INFO] generating a new CA key and certificate from CSR

2025/10/08 14:36:29 [INFO] generate received request

2025/10/08 14:36:29 [INFO] received CSR

2025/10/08 14:36:29 [INFO] generating key: rsa-2048

2025/10/08 14:36:29 [INFO] encoded CSR

2025/10/08 14:36:29 [INFO] signed certificate with serial number 328355904879817372340579796964724407291160984907

[root@k8s-cluster241 etcd]# ll /zhu/certs/etcd/etcd-ca*

-rw-r--r-- 1 root root 1050 Oct 8 14:36 /zhu/certs/etcd/etcd-ca.csr

-rw------- 1 root root 1679 Oct 8 14:36 /zhu/certs/etcd/etcd-ca-key.pem

-rw-r--r-- 1 root root 1318 Oct 8 14:36 /zhu/certs/etcd/etcd-ca.pem

[root@k8s-cluster241 etcd]# cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

[root@k8s-cluster241 etcd]# cat > etcd-csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "etcd",

"OU": "Etcd Security"

}

]

}

EOF

[root@k8s-cluster241 etcd]# cfssl gencert \

-ca=/zhu/certs/etcd/etcd-ca.pem \

-ca-key=/zhu/certs/etcd/etcd-ca-key.pem \

-config=ca-config.json \

--hostname=127.0.0.1,k8s-cluster241,k8s-cluster242,k8s-cluster243,10.0.0.241,10.0.0.242,10.0.0.243 \

--profile=kubernetes \

etcd-csr.json | cfssljson -bare /zhu/certs/etcd/etcd-server

277634120345136896745263216364616485119989972622

[root@k8s-cluster241 etcd]#

[root@k8s-cluster241 etcd]# data_rsync.sh /zhu/certs/

===== rsyncing k8s-cluster242: certs =====

Command succeeded!

===== rsyncing k8s-cluster243: certs =====

Command succeeded!

[root@k8s-cluster242 ~]# tree /zhu/certs/etcd/

/zhu/certs/etcd/

├── etcd-ca.csr

├── etcd-ca-key.pem

├── etcd-ca.pem

├── etcd-server.csr

├── etcd-server-key.pem

└── etcd-server.pem

0 directories, 6 files

[root@k8s-cluster242 ~]#

[root@k8s-cluster243 ~]# tree /zhu/certs/etcd/

/zhu/certs/etcd/

├── etcd-ca.csr

├── etcd-ca-key.pem

├── etcd-ca.pem

├── etcd-server.csr

├── etcd-server-key.pem

└── etcd-server.pem

0 directories, 6 files

[root@k8s-cluster243 ~]#

[root@k8s-cluster241 etcd]# mkdir -pv /zhu/softwares/etcd

mkdir: created directory '/zhu/softwares'

mkdir: created directory '/zhu/softwares/etcd'

[root@k8s-cluster241 etcd]#

[root@k8s-cluster241 etcd]# cat /zhu/softwares/etcd/etcd.config.yml

name: 'k8s-cluster241'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://10.0.0.241:2380'

listen-client-urls: 'https://10.0.0.241:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://10.0.0.241:2380'

advertise-client-urls: 'https://10.0.0.241:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-cluster241=https://10.0.0.241:2380,k8s-cluster242=https://10.0.0.242:2380,k8s-cluster243=https://10.0.0.243:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

cert-file: '/zhu/certs/etcd/etcd-server.pem'

key-file: '/zhu/certs/etcd/etcd-server-key.pem'

client-cert-auth: true

trusted-ca-file: '/zhu/certs/etcd/etcd-ca.pem'

auto-tls: true

peer-transport-security:

cert-file: '/zhu/certs/etcd/etcd-server.pem'

key-file: '/zhu/certs/etcd/etcd-server-key.pem'

peer-client-cert-auth: true

trusted-ca-file: '/zhu/certs/etcd/etcd-ca.pem'

auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

[root@k8s-cluster241 etcd]#

[root@k8s-cluster242 ~]# mkdir -pv /zhu/softwares/etcd

mkdir: created directory '/zhu/softwares'

mkdir: created directory '/zhu/softwares/etcd'

[root@k8s-cluster242 ~]#

[root@k8s-cluster242 ~]# cat /zhu/softwares/etcd/etcd.config.yml

name: 'k8s-cluster242'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://10.0.0.242:2380'

listen-client-urls: 'https://10.0.0.242:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://10.0.0.242:2380'

advertise-client-urls: 'https://10.0.0.242:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-cluster241=https://10.0.0.241:2380,k8s-cluster242=https://10.0.0.242:2380,k8s-cluster243=https://10.0.0.243:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

cert-file: '/zhu/certs/etcd/etcd-server.pem'

key-file: '/zhu/certs/etcd/etcd-server-key.pem'

client-cert-auth: true

trusted-ca-file: '/zhu/certs/etcd/etcd-ca.pem'

auto-tls: true

peer-transport-security:

cert-file: '/zhu/certs/etcd/etcd-server.pem'

key-file: '/zhu/certs/etcd/etcd-server-key.pem'

peer-client-cert-auth: true

trusted-ca-file: '/zhu/certs/etcd/etcd-ca.pem'

auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

[root@k8s-cluster242 ~]#

[root@k8s-cluster243 ~]# mkdir -pv /zhu/softwares/etcd

mkdir: created directory '/zhu/softwares'

mkdir: created directory '/zhu/softwares/etcd'

[root@k8s-cluster243 ~]#

[root@k8s-cluster243 ~]# cat /zhu/softwares/etcd/etcd.config.yml

name: 'k8s-cluster243'

data-dir: /var/lib/etcd

wal-dir: /var/lib/etcd/wal

snapshot-count: 5000

heartbeat-interval: 100

election-timeout: 1000

quota-backend-bytes: 0

listen-peer-urls: 'https://10.0.0.243:2380'

listen-client-urls: 'https://10.0.0.243:2379,http://127.0.0.1:2379'

max-snapshots: 3

max-wals: 5

cors:

initial-advertise-peer-urls: 'https://10.0.0.243:2380'

advertise-client-urls: 'https://10.0.0.243:2379'

discovery:

discovery-fallback: 'proxy'

discovery-proxy:

discovery-srv:

initial-cluster: 'k8s-cluster241=https://10.0.0.241:2380,k8s-cluster242=https://10.0.0.242:2380,k8s-cluster243=https://10.0.0.243:2380'

initial-cluster-token: 'etcd-k8s-cluster'

initial-cluster-state: 'new'

strict-reconfig-check: false

enable-v2: true

enable-pprof: true

proxy: 'off'

proxy-failure-wait: 5000

proxy-refresh-interval: 30000

proxy-dial-timeout: 1000

proxy-write-timeout: 5000

proxy-read-timeout: 0

client-transport-security:

cert-file: '/zhu/certs/etcd/etcd-server.pem'

key-file: '/zhu/certs/etcd/etcd-server-key.pem'

client-cert-auth: true

trusted-ca-file: '/zhu/certs/etcd/etcd-ca.pem'

auto-tls: true

peer-transport-security:

cert-file: '/zhu/certs/etcd/etcd-server.pem'

key-file: '/zhu/certs/etcd/etcd-server-key.pem'

peer-client-cert-auth: true

trusted-ca-file: '/zhu/certs/etcd/etcd-ca.pem'

auto-tls: true

debug: false

log-package-levels:

log-outputs: [default]

force-new-cluster: false

[root@k8s-cluster243 ~]#
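
The three `etcd.config.yml` files above differ only in the node name and the node IP used in the listen and advertise URLs. As a rough sketch, the node-specific lines could be generated from those two values; `etcd_node_fields` is a hypothetical helper name, and the remaining keys would still be copied unchanged from the file shown above.

```shell
# Sketch: print the node-specific lines of etcd.config.yml.
# etcd_node_fields is a hypothetical helper; all other keys in the file
# are identical on the three nodes.
# Usage: etcd_node_fields <node-name> <node-ip>
etcd_node_fields() {
  name="$1"
  ip="$2"
  cat <<EOF
name: '${name}'
listen-peer-urls: 'https://${ip}:2380'
listen-client-urls: 'https://${ip}:2379,http://127.0.0.1:2379'
initial-advertise-peer-urls: 'https://${ip}:2380'
advertise-client-urls: 'https://${ip}:2379'
EOF
}
```

For example, `etcd_node_fields k8s-cluster242 10.0.0.242` prints the five lines that are specific to the second node.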

vim /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Service

Documentation=https://coreos.com/etcd/docs/latest/

After=network.target

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd --config-file=/zhu/softwares/etcd/etcd.config.yml

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

Alias=etcd3.service

systemctl daemon-reload && systemctl enable --now etcd

systemctl status etcd

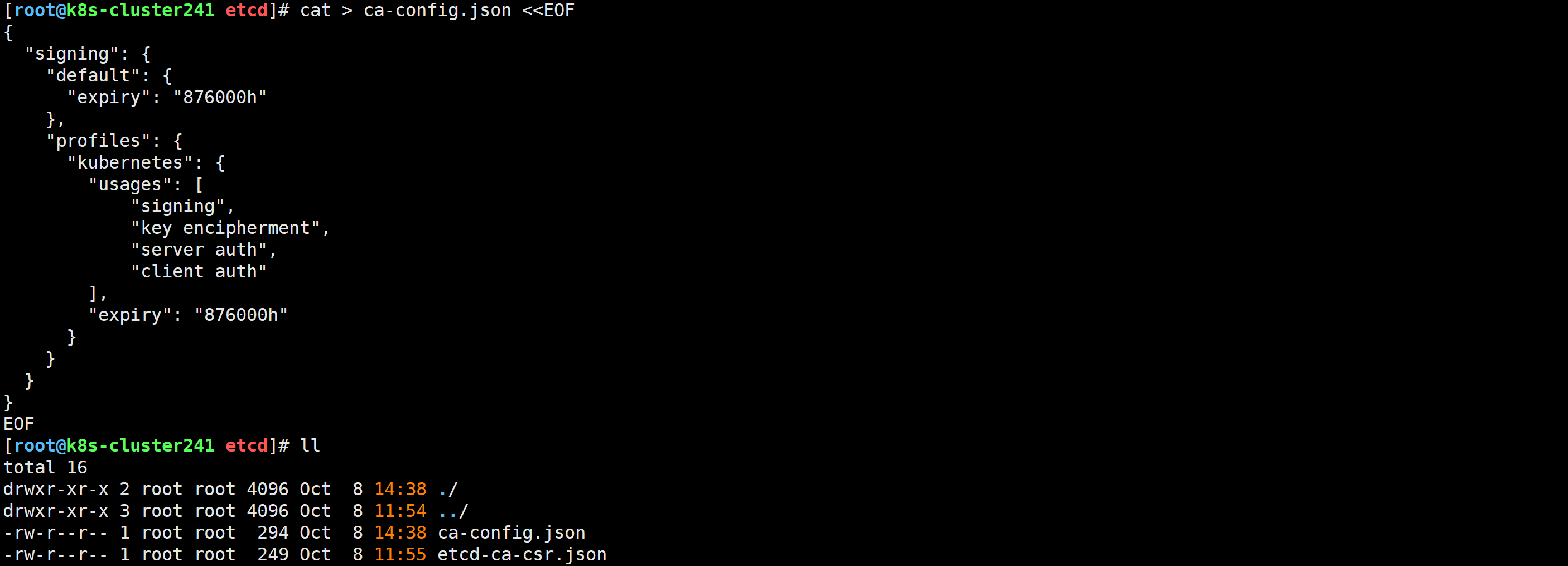

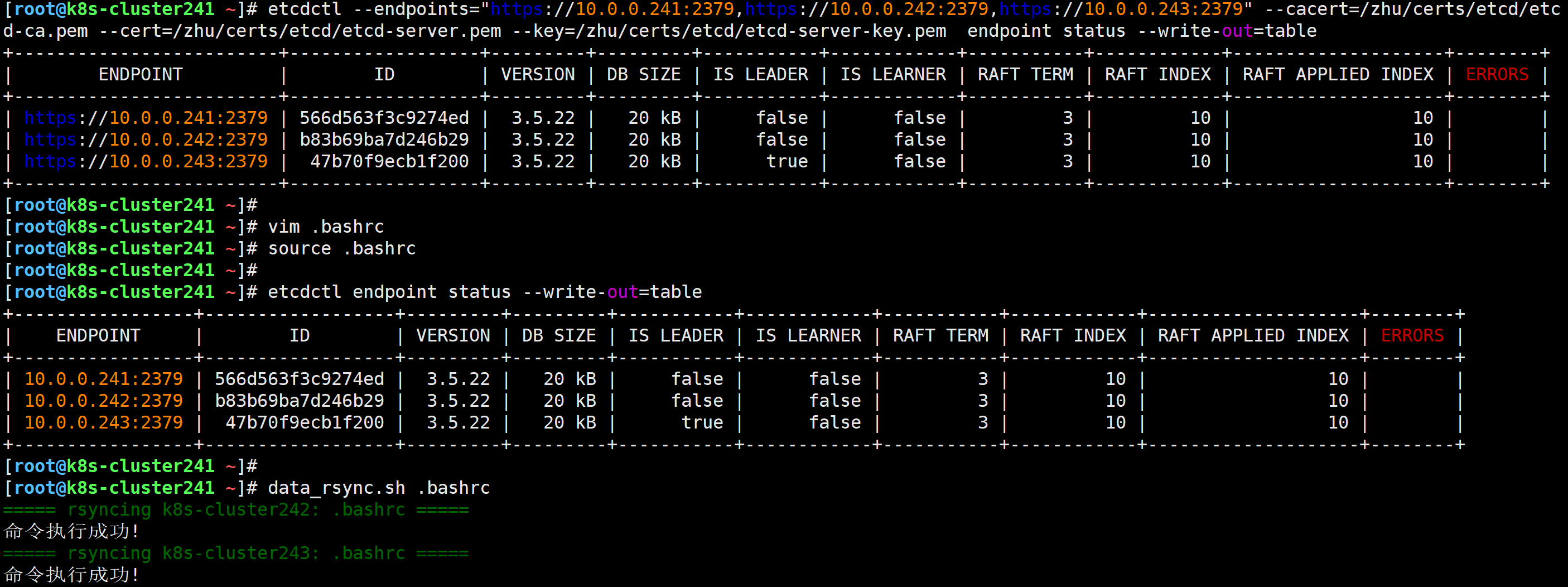

etcdctl --endpoints="https://10.0.0.241:2379,https://10.0.0.242:2379,https://10.0.0.243:2379" --cacert=/zhu/certs/etcd/etcd-ca.pem --cert=/zhu/certs/etcd/etcd-server.pem --key=/zhu/certs/etcd/etcd-server-key.pem endpoint status --write-out=table

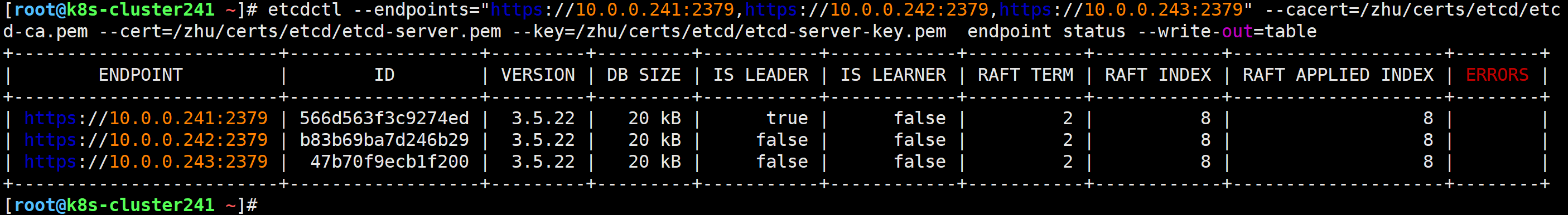

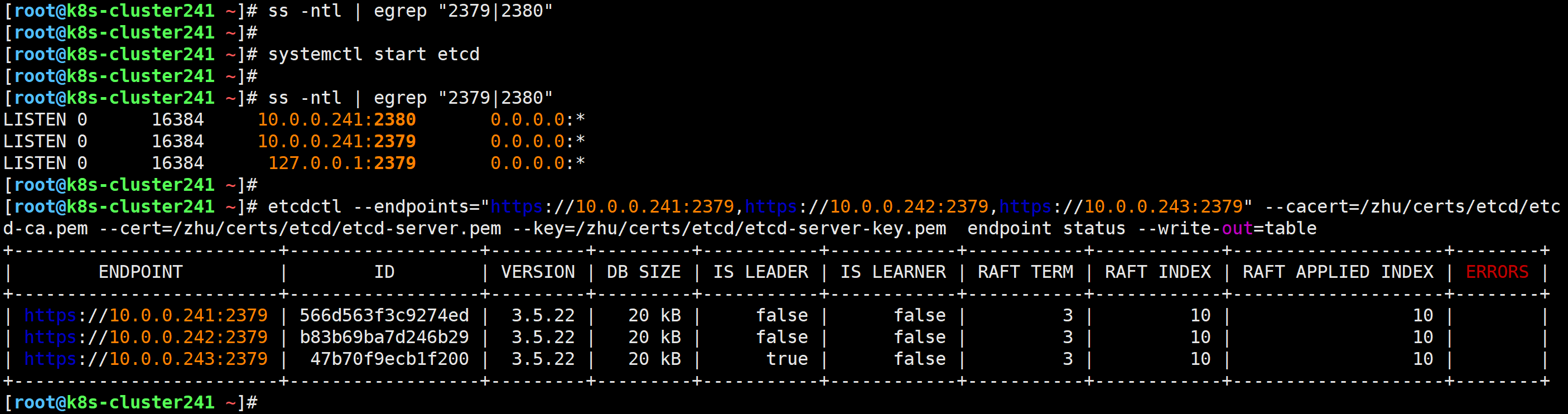

[root@k8s-cluster241 ~]# systemctl stop etcd

Check the cluster status again: a new leader has been elected.

[root@k8s-cluster241 ~]# systemctl start etcd
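
The failover check above can also be done without reading the table by eye. This is a small sketch under one assumption: that the default (simple) output of `etcdctl endpoint status` prints one comma-separated line per endpoint, with the is-leader flag in the fifth field. `find_leader` is a hypothetical helper name.

```shell
# Sketch: print the endpoint that currently reports itself as leader.
# Assumption: input is the simple (non-table) output of
# `etcdctl endpoint status`, one comma-separated line per endpoint,
# where field 5 is the is-leader flag ("true" or "false").
find_leader() {
  awk -F', *' '$5 == "true" { print $1 }'
}
```

A possible use is `etcdctl --endpoints=... --cacert=... --cert=... --key=... endpoint status | find_leader`, run once before stopping etcd on a node and once after, to compare the two results.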

[root@k8s-cluster241 ~]# vim .bashrc

...

alias etcdctl='etcdctl --endpoints="10.0.0.241:2379,10.0.0.242:2379,10.0.0.243:2379" --cacert=/zhu/certs/etcd/etcd-ca.pem --cert=/zhu/certs/etcd/etcd-server.pem --key=/zhu/certs/etcd/etcd-server-key.pem '

[root@k8s-cluster241 ~]# source .bashrc

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# etcdctl endpoint status --write-out=table

[root@k8s-cluster241 ~]# data_rsync.sh .bashrc

===== rsyncing k8s-cluster242: .bashrc =====

Command succeeded!

===== rsyncing k8s-cluster243: .bashrc =====

Command succeeded!

[root@k8s-cluster241 ~]#

wget https://dl.k8s.io/v1.33.3/kubernetes-server-linux-amd64.tar.gz

or

wget http://192.168.16.32/Resources/Kubernetes/K8S_Cluster/binary/v1.33/kubernetes-server-linux-amd64.tar.gz

[root@k8s-cluster241 ~]# tar xf kubernetes-server-linux-amd64.tar.gz --strip-components=3 -C /usr/local/bin kubernetes/server/bin/kube{let,ctl,-apiserver,-controller-manager,-scheduler,-proxy}

[root@k8s-cluster241 ~]# kube-apiserver --version

Kubernetes v1.33.3

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kube-proxy --version

Kubernetes v1.33.3

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kube-controller-manager --version

Kubernetes v1.33.3

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kube-scheduler --version

Kubernetes v1.33.3

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubelet --version

Kubernetes v1.33.3

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl version

Client Version: v1.33.3

Kustomize Version: v5.6.0

The connection to the server localhost:8080 was refused - did you specify the right host or port?

[root@k8s-cluster241 ~]#

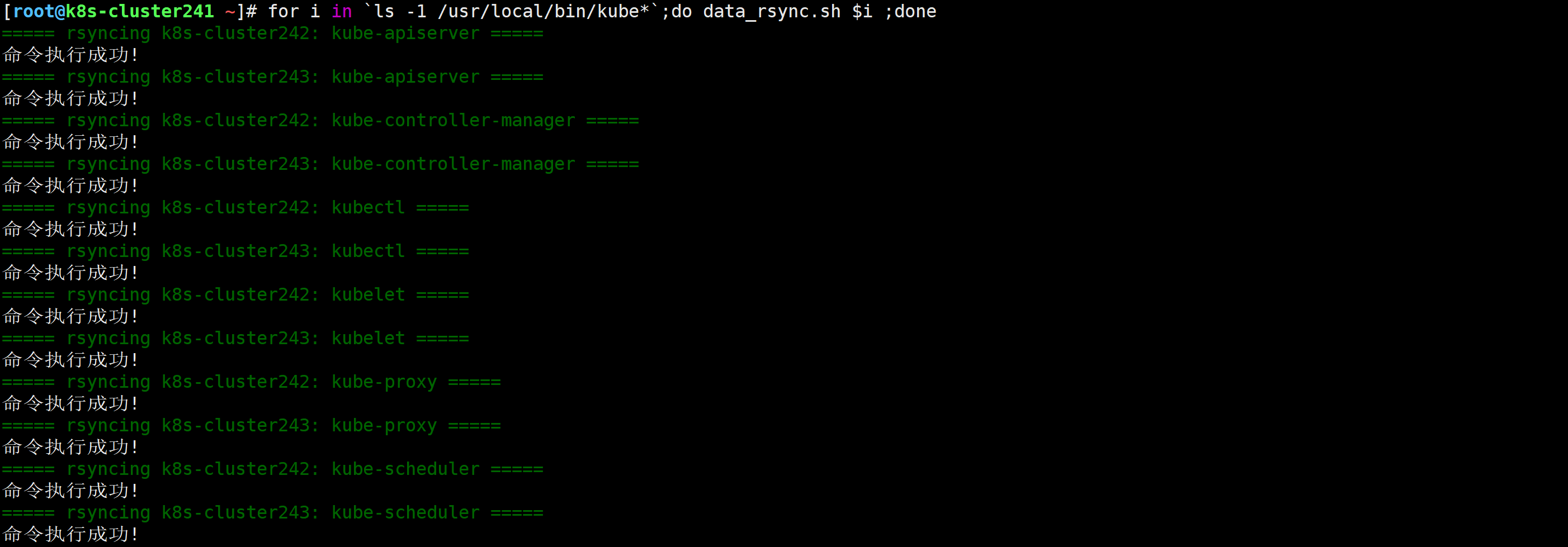

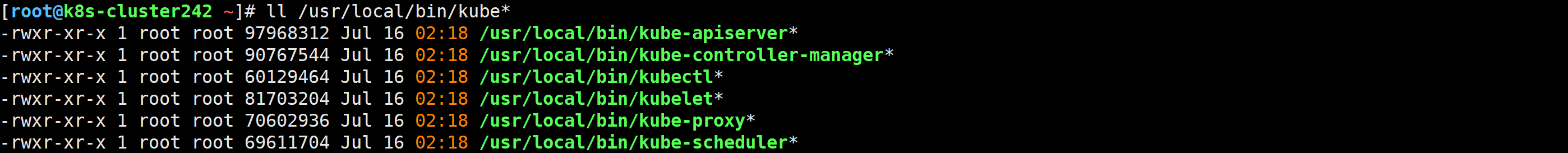

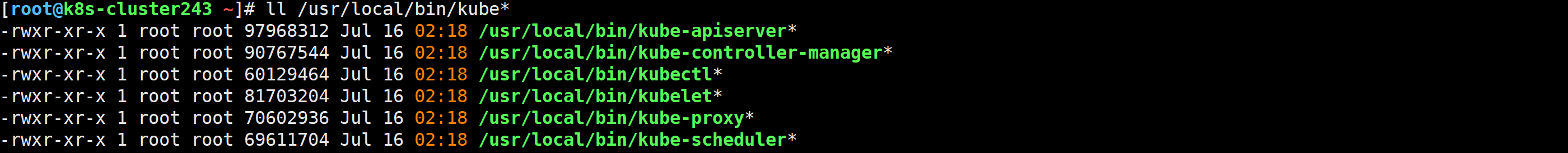

[root@k8s-cluster241 ~]# for i in `ls -1 /usr/local/bin/kube*`;do data_rsync.sh $i ;done

The certificate signing request file sets fields such as the domain name, company, and department.

[root@k8s-cluster241 ~]# mkdir -pv /zhu/pki/k8s && cd /zhu/pki/k8s

mkdir: created directory '/zhu/pki/k8s'

[root@k8s-cluster241 k8s]# cat > k8s-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

],

"ca": {

"expiry": "876000h"

}

}

EOF

[root@k8s-cluster241 k8s]# mkdir -pv /zhu/certs/k8s/

mkdir: created directory '/zhu/certs/k8s/'

[root@k8s-cluster241 k8s]#

[root@k8s-cluster241 k8s]# cfssl gencert -initca k8s-ca-csr.json | cfssljson -bare /zhu/certs/k8s/k8s-ca

38351370854732679857293190732239378491349732440

[root@k8s-cluster241 k8s]#

[root@k8s-cluster241 k8s]# ll /zhu/certs/k8s/k8s-ca*

[root@k8s-cluster241 k8s]# cat > k8s-ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "876000h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "876000h"

}

}

}

}

EOF

The certificate signing request file sets fields such as the domain name, company, and department.

[root@k8s-cluster241 k8s]# cat > apiserver-csr.json <<EOF

{

"CN": "kube-apiserver",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "Kubernetes",

"OU": "Kubernetes-manual"

}

]

}

EOF

[root@k8s-cluster241 k8s]# cfssl gencert \

-ca=/zhu/certs/k8s/k8s-ca.pem \

-ca-key=/zhu/certs/k8s/k8s-ca-key.pem \

-config=k8s-ca-config.json \

--hostname=10.200.0.1,10.0.0.240,kubernetes,kubernetes.default,kubernetes.default.svc,kubernetes.default.svc.zhubl,kubernetes.default.svc.zhubl.xyz,10.0.0.241,10.0.0.242,10.0.0.243 \

--profile=kubernetes \

apiserver-csr.json | cfssljson -bare /zhu/certs/k8s/apiserver

88443181032593396701388594587814174362579296599

[root@k8s-cluster241 k8s]#
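
The `--hostname` list passed to `cfssl gencert` above is easy to get wrong by hand. The sketch below shows one way it might be assembled from the Service CIDR, the load balancer VIP, the cluster domain, and the node IPs. `first_service_ip` and `build_apiserver_sans` are hypothetical helper names, and the CIDR handling is deliberately simple: it assumes an IPv4 network address that ends in `.0`.

```shell
# Sketch: assemble the --hostname (SAN) list for the apiserver certificate.
# Both function names are hypothetical helpers, not part of cfssl.

# Print the first address of an IPv4 CIDR whose network address ends in .0
# (for example 10.200.0.0/16 gives 10.200.0.1).
first_service_ip() {
  network="${1%/*}"        # strip the /prefix part
  echo "${network%.*}.1"   # replace the last octet with 1
}

# Usage: build_apiserver_sans <svc-cidr> <vip> <cluster-domain> <node-ip>...
build_apiserver_sans() {
  svc_cidr="$1"
  vip="$2"
  domain="$3"
  shift 3
  sans="$(first_service_ip "$svc_cidr"),${vip}"
  sans="${sans},kubernetes,kubernetes.default,kubernetes.default.svc"
  # Short form (first label of the domain) and full form of the svc name.
  sans="${sans},kubernetes.default.svc.${domain%%.*},kubernetes.default.svc.${domain}"
  for ip in "$@"; do
    sans="${sans},${ip}"
  done
  echo "$sans"
}
```

Called as `build_apiserver_sans 10.200.0.0/16 10.0.0.240 zhubl.xyz 10.0.0.241 10.0.0.242 10.0.0.243`, it reproduces the list used in the command above.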

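Tips:

"10.200.0.1" is the first address of our Service (svc) network range; adjust it to your own environment.

"10.0.0.240" is the VIP of the load balancer.

"kubernetes,...,kubernetes.default.svc.zhubl.xyz" are the A records through which the apiServer svc is resolved.

"10.0.0.241,...,10.0.0.243" are the addresses of the K8S cluster nodes.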

The aggregation certificate lets third-party components (such as metrics-server) use this certificate file to communicate with the apiServer.

[root@k8s-cluster241 k8s]# cat > front-proxy-ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

[root@k8s-cluster241 k8s]# cfssl gencert -initca front-proxy-ca-csr.json | cfssljson -bare /zhu/certs/k8s/front-proxy-ca

203480904066750632929259009320863977312911655916

[root@k8s-cluster241 k8s]#

[root@k8s-cluster241 k8s]# ll /zhu/certs/k8s/front-proxy-ca*

[root@k8s-cluster241 k8s]# cat > front-proxy-client-csr.json <<EOF

{

"CN": "front-proxy-client",

"key": {

"algo": "rsa",

"size": 2048

}

}

EOF

[root@k8s-cluster241 k8s]# cfssl gencert \

-ca=/zhu/certs/k8s/front-proxy-ca.pem \

-ca-key=/zhu/certs/k8s/front-proxy-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

front-proxy-client-csr.json | cfssljson -bare /zhu/certs/k8s/front-proxy-client

17921755826286501095019668749952373587673370774

[root@k8s-cluster241 k8s]# cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-proxy",

"OU": "Kubernetes-manual"

}

]

}

EOF

[root@k8s-cluster241 k8s]# cfssl gencert \

-ca=/zhu/certs/k8s/k8s-ca.pem \

-ca-key=/zhu/certs/k8s/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

kube-proxy-csr.json | cfssljson -bare /zhu/certs/k8s/kube-proxy

606358852546492388835885174100347810303628203807

[root@k8s-cluster241 k8s]# cat > controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "Kubernetes-manual"

}

]

}

EOF

[root@k8s-cluster241 k8s]# cfssl gencert \

-ca=/zhu/certs/k8s/k8s-ca.pem \

-ca-key=/zhu/certs/k8s/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

controller-manager-csr.json | cfssljson -bare /zhu/certs/k8s/controller-manager

143464706820092121078425752475307984345772978229

[root@k8s-cluster241 k8s]# cat > scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "Kubernetes-manual"

}

]

}

EOF

[root@k8s-cluster241 k8s]# cfssl gencert \

-ca=/zhu/certs/k8s/k8s-ca.pem \

-ca-key=/zhu/certs/k8s/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

scheduler-csr.json | cfssljson -bare /zhu/certs/k8s/scheduler

565724523217634157420536823530210966412699610621

[root@k8s-cluster241 k8s]# cat > admin-csr.json <<EOF

{

"CN": "admin",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "Kubernetes-manual"

}

]

}

EOF

[root@k8s-cluster241 k8s]# cfssl gencert \

-ca=/zhu/certs/k8s/k8s-ca.pem \

-ca-key=/zhu/certs/k8s/k8s-ca-key.pem \

-config=k8s-ca-config.json \

-profile=kubernetes \

admin-csr.json | cfssljson -bare /zhu/certs/k8s/admin

The api-server and controller manager components can use this private key to sign the ID tokens they issue.

[root@k8s-cluster241 k8s]# openssl genrsa -out /zhu/certs/k8s/sa.key 2048

[root@k8s-cluster241 k8s]# openssl rsa -in /zhu/certs/k8s/sa.key -pubout -out /zhu/certs/k8s/sa.pub

writing RSA key

[root@k8s-cluster241 k8s]# ll /zhu/certs/k8s/sa*
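
To illustrate why both `sa.key` and `sa.pub` are generated, the sketch below signs a small payload with a private key and verifies the signature with the matching public key. It is only an illustration of the principle: it uses a throwaway key pair in a temporary directory rather than the cluster's `sa.key`, the payload is an arbitrary string rather than a real service account token, and `sa_sign_verify_demo` is a hypothetical name.

```shell
# Sketch: sign with a private key, verify with the matching public key.
# Works on a throwaway key pair in a temp dir, never on /zhu/certs/k8s/sa.key.
sa_sign_verify_demo() {
  tmp="$(mktemp -d)" || return 1
  # Same two commands as above, pointed at the temp dir.
  openssl genrsa -out "$tmp/sa.key" 2048 2>/dev/null
  openssl rsa -in "$tmp/sa.key" -pubout -out "$tmp/sa.pub" 2>/dev/null
  # Arbitrary stand-in payload (not a real token).
  printf 'example-token-payload' > "$tmp/payload"
  # Sign with the private key, then check the signature with the public key.
  openssl dgst -sha256 -sign "$tmp/sa.key" -out "$tmp/payload.sig" "$tmp/payload"
  openssl dgst -sha256 -verify "$tmp/sa.pub" -signature "$tmp/payload.sig" "$tmp/payload"
  status=$?
  rm -rf "$tmp"
  return "$status"
}
```

Running `sa_sign_verify_demo` should report a successful verification; anyone holding only the public key can check a signature but cannot create one.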

[root@k8s-cluster241 k8s]# mkdir -pv /zhu/certs/kubeconfig

mkdir: created directory '/zhu/certs/kubeconfig'

[root@k8s-cluster241 k8s]#

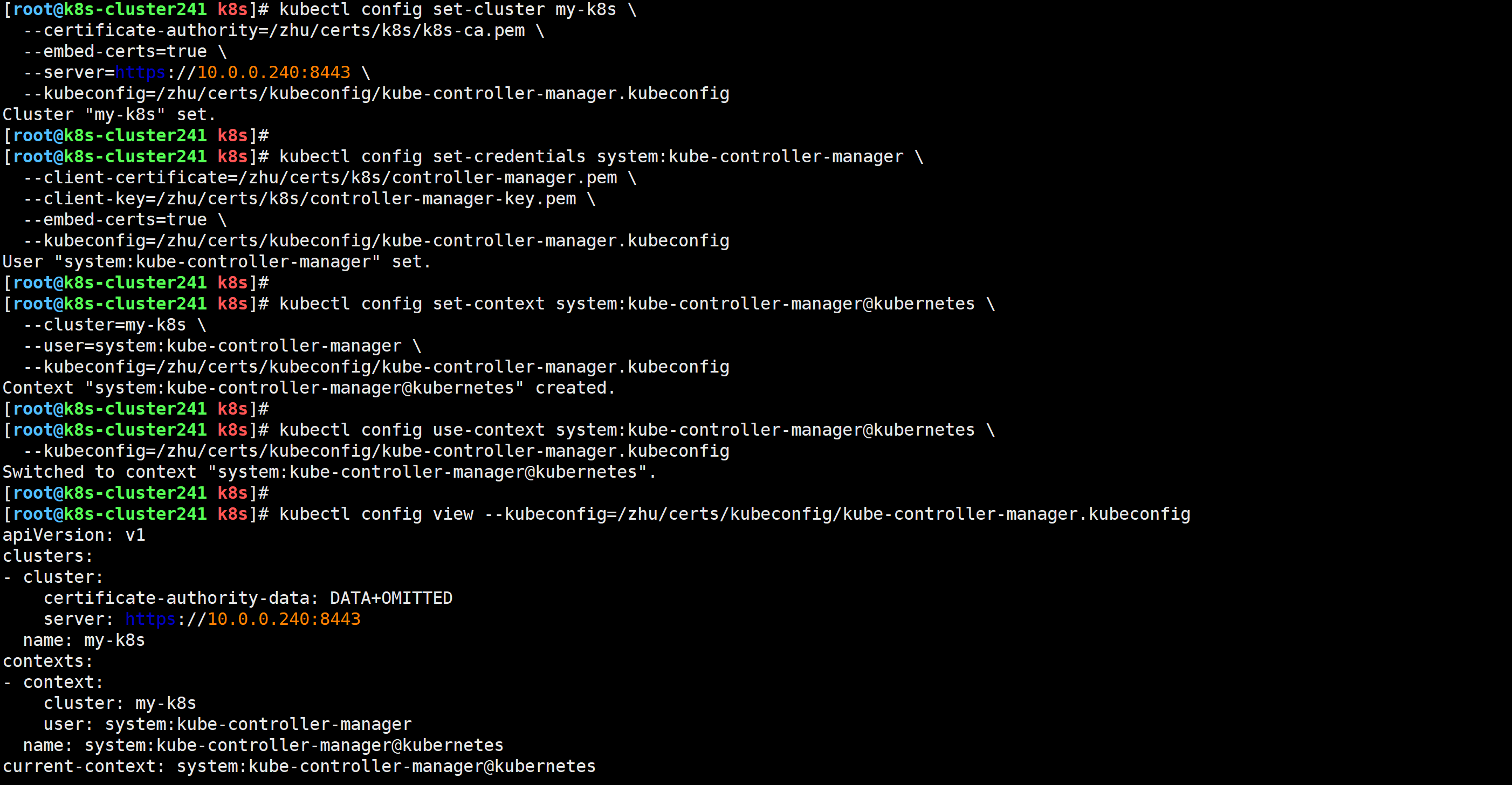

[root@k8s-cluster241 k8s]# kubectl config set-cluster my-k8s \

--certificate-authority=/zhu/certs/k8s/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/zhu/certs/kubeconfig/kube-controller-manager.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-credentials system:kube-controller-manager \

--client-certificate=/zhu/certs/k8s/controller-manager.pem \

--client-key=/zhu/certs/k8s/controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=/zhu/certs/kubeconfig/kube-controller-manager.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=my-k8s \

--user=system:kube-controller-manager \

--kubeconfig=/zhu/certs/kubeconfig/kube-controller-manager.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config use-context system:kube-controller-manager@kubernetes \

--kubeconfig=/zhu/certs/kubeconfig/kube-controller-manager.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config view --kubeconfig=/zhu/certs/kubeconfig/kube-controller-manager.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: DATA+OMITTED

server: https://10.0.0.240:8443

name: my-k8s

contexts:

- context:

cluster: my-k8s

user: system:kube-controller-manager

name: system:kube-controller-manager@kubernetes

current-context: system:kube-controller-manager@kubernetes

kind: Config

preferences: {}

users:

- name: system:kube-controller-manager

user:

client-certificate-data: DATA+OMITTED

client-key-data: DATA+OMITTED

[root@k8s-cluster241 k8s]#

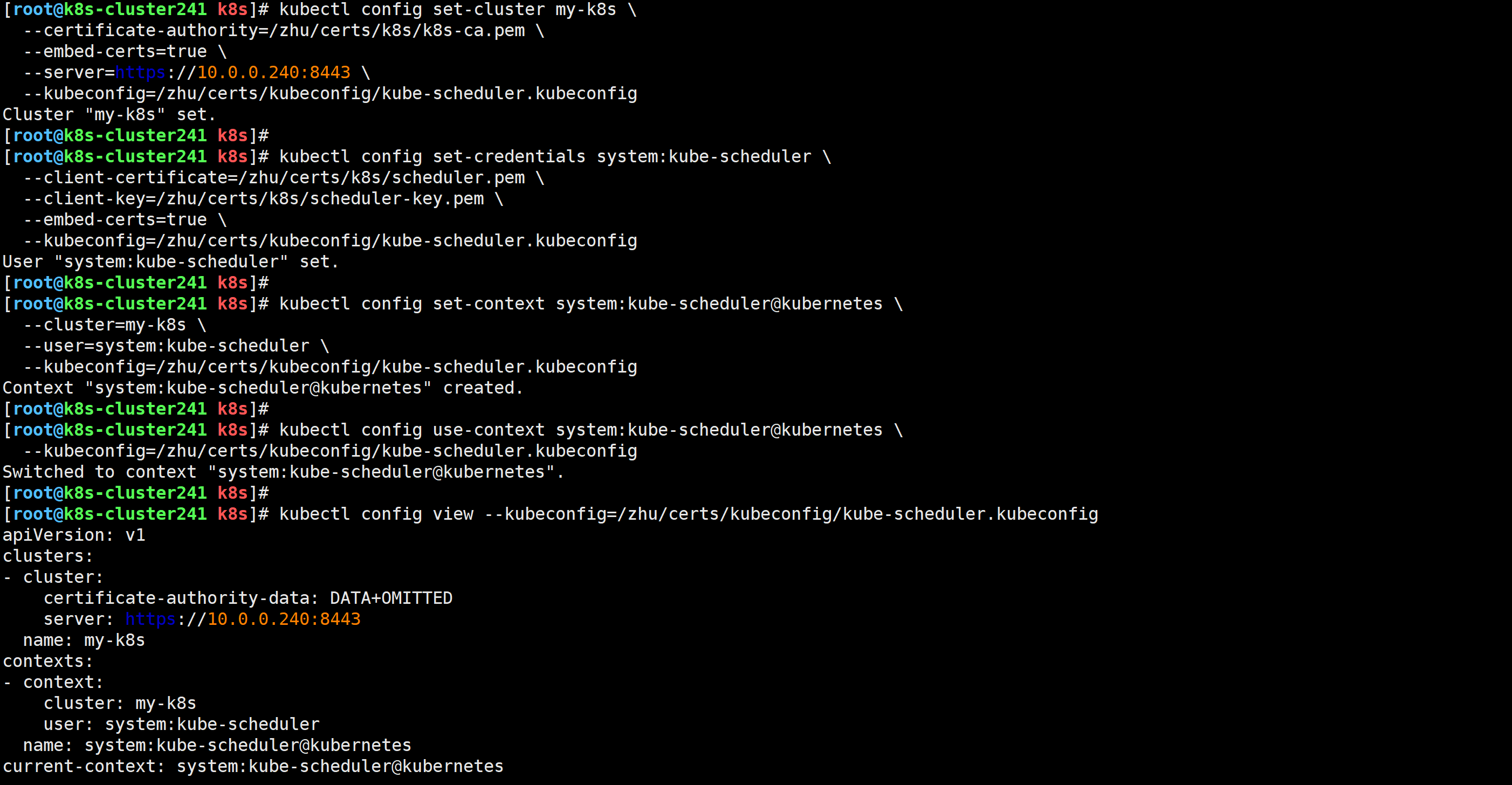

[root@k8s-cluster241 k8s]# kubectl config set-cluster my-k8s \

--certificate-authority=/zhu/certs/k8s/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/zhu/certs/kubeconfig/kube-scheduler.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-credentials system:kube-scheduler \

--client-certificate=/zhu/certs/k8s/scheduler.pem \

--client-key=/zhu/certs/k8s/scheduler-key.pem \

--embed-certs=true \

--kubeconfig=/zhu/certs/kubeconfig/kube-scheduler.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=my-k8s \

--user=system:kube-scheduler \

--kubeconfig=/zhu/certs/kubeconfig/kube-scheduler.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config use-context system:kube-scheduler@kubernetes \

--kubeconfig=/zhu/certs/kubeconfig/kube-scheduler.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config view --kubeconfig=/zhu/certs/kubeconfig/kube-scheduler.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: DATA+OMITTED

server: https://10.0.0.240:8443

name: my-k8s

contexts:

- context:

cluster: my-k8s

user: system:kube-scheduler

name: system:kube-scheduler@kubernetes

current-context: system:kube-scheduler@kubernetes

kind: Config

preferences: {}

users:

- name: system:kube-scheduler

user:

client-certificate-data: DATA+OMITTED

client-key-data: DATA+OMITTED

[root@k8s-cluster241 k8s]#

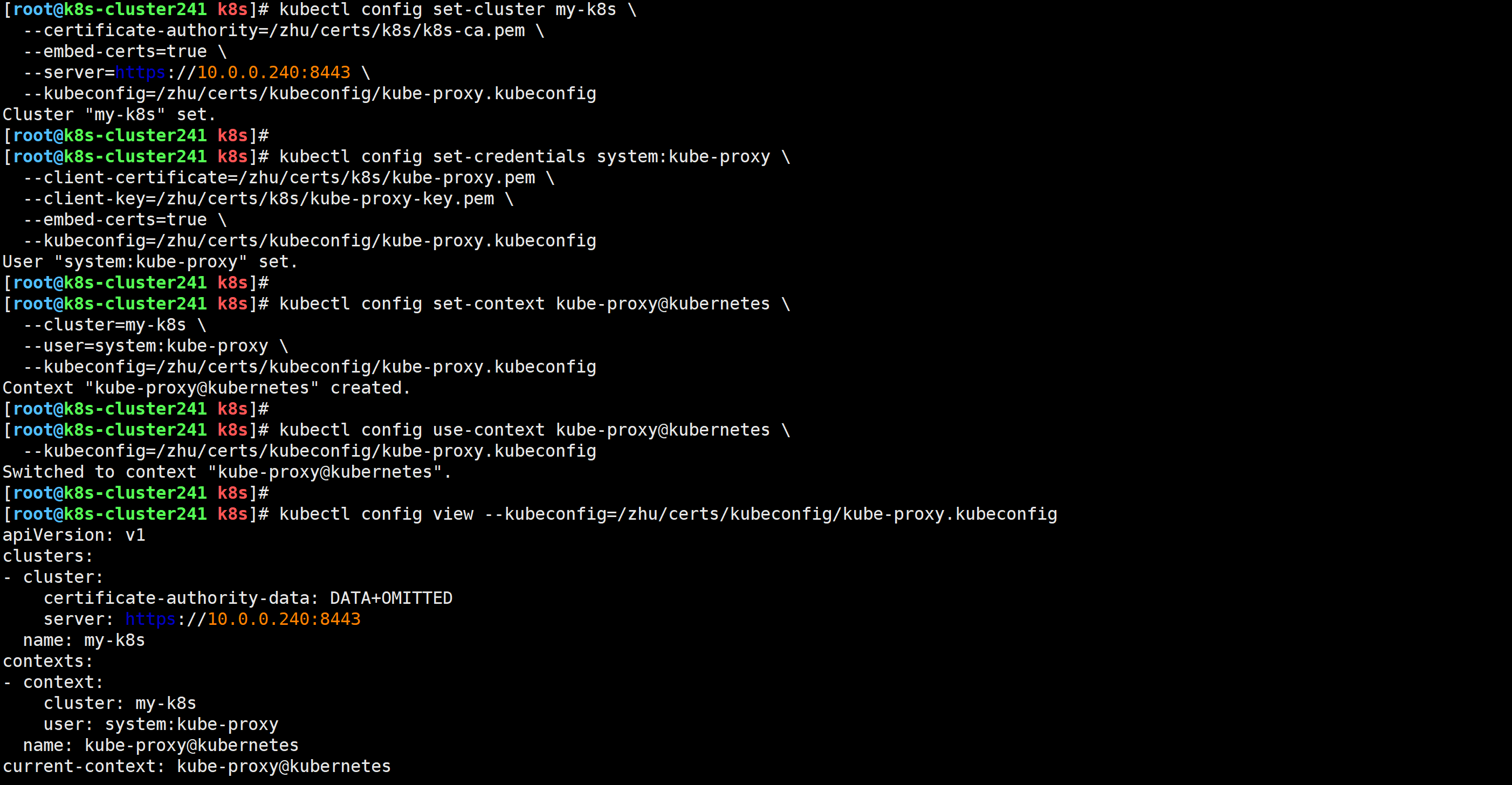

[root@k8s-cluster241 k8s]# kubectl config set-cluster my-k8s \

--certificate-authority=/zhu/certs/k8s/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/zhu/certs/kubeconfig/kube-proxy.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-credentials system:kube-proxy \

--client-certificate=/zhu/certs/k8s/kube-proxy.pem \

--client-key=/zhu/certs/k8s/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=/zhu/certs/kubeconfig/kube-proxy.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-context kube-proxy@kubernetes \

--cluster=my-k8s \

--user=system:kube-proxy \

--kubeconfig=/zhu/certs/kubeconfig/kube-proxy.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config use-context kube-proxy@kubernetes \

--kubeconfig=/zhu/certs/kubeconfig/kube-proxy.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config view --kubeconfig=/zhu/certs/kubeconfig/kube-proxy.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: DATA+OMITTED

server: https://10.0.0.240:8443

name: my-k8s

contexts:

- context:

cluster: my-k8s

user: system:kube-proxy

name: kube-proxy@kubernetes

current-context: kube-proxy@kubernetes

kind: Config

preferences: {}

users:

- name: system:kube-proxy

user:

client-certificate-data: DATA+OMITTED

client-key-data: DATA+OMITTED

[root@k8s-cluster241 k8s]#

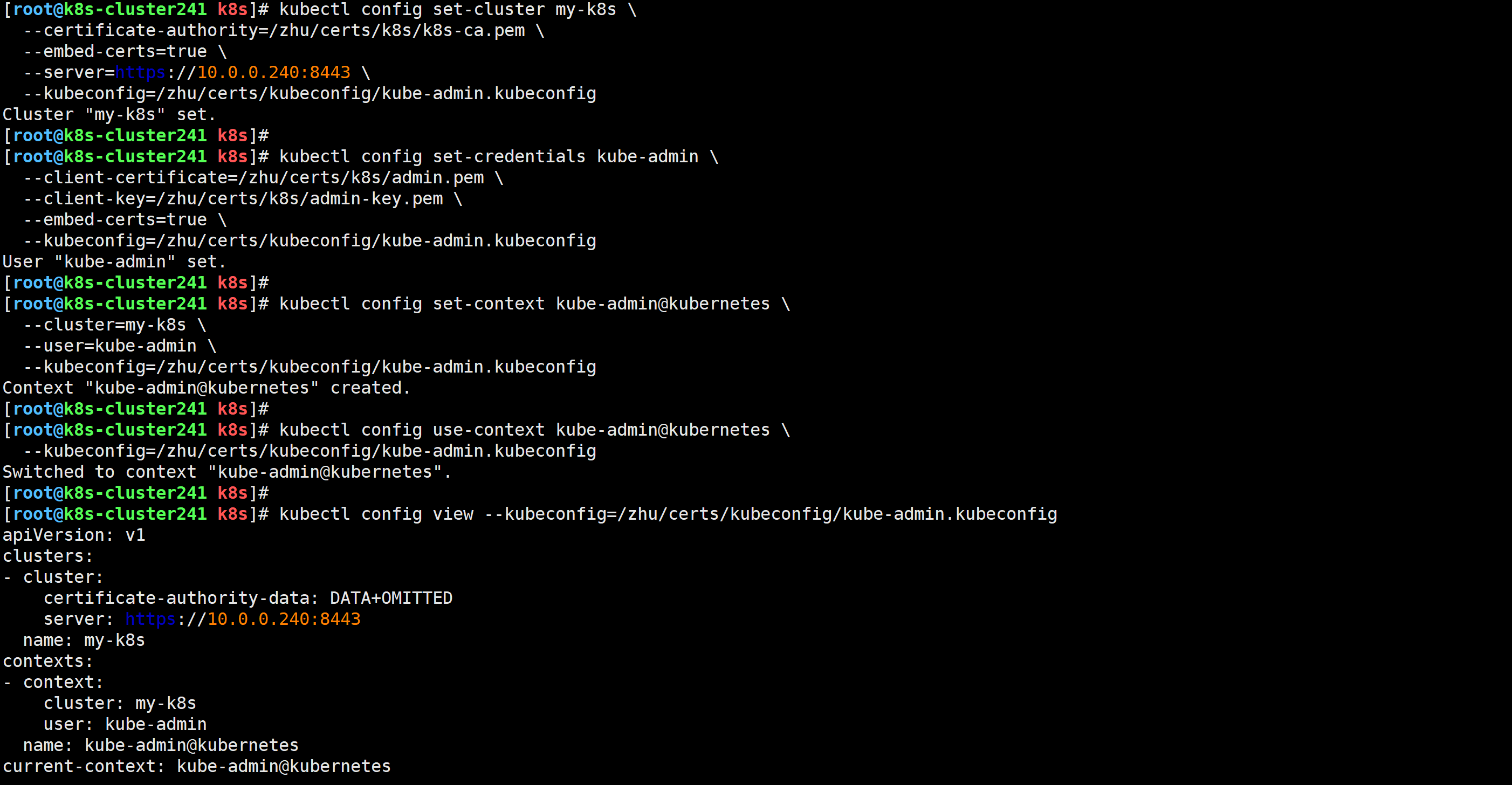

[root@k8s-cluster241 k8s]# kubectl config set-cluster my-k8s \

--certificate-authority=/zhu/certs/k8s/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/zhu/certs/kubeconfig/kube-admin.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-credentials kube-admin \

--client-certificate=/zhu/certs/k8s/admin.pem \

--client-key=/zhu/certs/k8s/admin-key.pem \

--embed-certs=true \

--kubeconfig=/zhu/certs/kubeconfig/kube-admin.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config set-context kube-admin@kubernetes \

--cluster=my-k8s \

--user=kube-admin \

--kubeconfig=/zhu/certs/kubeconfig/kube-admin.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config use-context kube-admin@kubernetes \

--kubeconfig=/zhu/certs/kubeconfig/kube-admin.kubeconfig

[root@k8s-cluster241 k8s]# kubectl config view --kubeconfig=/zhu/certs/kubeconfig/kube-admin.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: DATA+OMITTED

server: https://10.0.0.240:8443

name: my-k8s

contexts:

- context:

cluster: my-k8s

user: kube-admin

name: kube-admin@kubernetes

current-context: kube-admin@kubernetes

kind: Config

preferences: {}

users:

- name: kube-admin

user:

client-certificate-data: DATA+OMITTED

client-key-data: DATA+OMITTED

[root@k8s-cluster241 k8s]#
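
The four kubeconfig files above are all built with the same sequence of `kubectl config` calls and differ only in file name, user, context, and certificate. A possible way to avoid the repetition is sketched below. `make_kubeconfig` is a hypothetical helper, and the `KUBECTL` variable is an assumption added here so the commands can be previewed with `KUBECTL=echo` before running them for real.

```shell
# Sketch: build one kubeconfig with the four kubectl config calls used above.
# make_kubeconfig and the KUBECTL override are hypothetical conveniences.
# Usage: make_kubeconfig <file-name> <user> <context> <cert-basename>
make_kubeconfig() {
  kc="/zhu/certs/kubeconfig/$1.kubeconfig"
  ${KUBECTL:-kubectl} config set-cluster my-k8s \
    --certificate-authority=/zhu/certs/k8s/k8s-ca.pem \
    --embed-certs=true \
    --server=https://10.0.0.240:8443 \
    --kubeconfig="$kc" &&
  ${KUBECTL:-kubectl} config set-credentials "$2" \
    --client-certificate="/zhu/certs/k8s/$4.pem" \
    --client-key="/zhu/certs/k8s/$4-key.pem" \
    --embed-certs=true \
    --kubeconfig="$kc" &&
  ${KUBECTL:-kubectl} config set-context "$3" \
    --cluster=my-k8s \
    --user="$2" \
    --kubeconfig="$kc" &&
  ${KUBECTL:-kubectl} config use-context "$3" --kubeconfig="$kc"
}
```

For instance, `make_kubeconfig kube-scheduler system:kube-scheduler system:kube-scheduler@kubernetes scheduler` corresponds to the scheduler steps shown earlier.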

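If the controller manager, the scheduler, and the ApiServer are not on the same node, the certificates do not need to be copied, because they are already embedded in the kubeconfig files.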

[root@k8s-cluster241 k8s]# data_rsync.sh /zhu/certs/kubeconfig/

===== rsyncing k8s-cluster242: kubeconfig =====

Command succeeded!

===== rsyncing k8s-cluster243: kubeconfig =====

Command succeeded!

[root@k8s-cluster241 k8s]#

In our environment the api-server, controller manager, and scheduler are not deployed on separate nodes, so every node needs the certificates for the api-server to use at startup.

[root@k8s-cluster241 k8s]# data_rsync.sh /zhu/certs/k8s/

===== rsyncing k8s-cluster242: k8s =====

Command succeeded!

===== rsyncing k8s-cluster243: k8s =====

Command succeeded!

[root@k8s-cluster241 k8s]#

[root@k8s-cluster241 k8s]# tree /zhu/

/zhu/

├── certs

│ ├── etcd

│ │ ├── etcd-ca.csr

│ │ ├── etcd-ca-key.pem

│ │ ├── etcd-ca.pem

│ │ ├── etcd-server.csr

│ │ ├── etcd-server-key.pem

│ │ └── etcd-server.pem

│ ├── k8s

│ │ ├── admin.csr

│ │ ├── admin-key.pem

│ │ ├── admin.pem

│ │ ├── apiserver.csr

│ │ ├── apiserver-key.pem

│ │ ├── apiserver.pem

│ │ ├── controller-manager.csr

│ │ ├── controller-manager-key.pem

│ │ ├── controller-manager.pem

│ │ ├── front-proxy-ca.csr

│ │ ├── front-proxy-ca-key.pem

│ │ ├── front-proxy-ca.pem

│ │ ├── front-proxy-client.csr

│ │ ├── front-proxy-client-key.pem

│ │ ├── front-proxy-client.pem

│ │ ├── k8s-ca.csr

│ │ ├── k8s-ca-key.pem

│ │ ├── k8s-ca.pem

│ │ ├── kube-proxy.csr

│ │ ├── kube-proxy-key.pem

│ │ ├── kube-proxy.pem

│ │ ├── sa.key

│ │ ├── sa.pub

│ │ ├── scheduler.csr

│ │ ├── scheduler-key.pem

│ │ └── scheduler.pem

│ └── kubeconfig

│ ├── kube-admin.kubeconfig

│ ├── kube-controller-manager.kubeconfig

│ ├── kube-proxy.kubeconfig

│ └── kube-scheduler.kubeconfig

├── pki

│ ├── etcd

│ │ ├── ca-config.json

│ │ ├── etcd-ca-csr.json

│ │ └── etcd-csr.json

│ └── k8s

│ ├── admin-csr.json

│ ├── apiserver-csr.json

│ ├── controller-manager-csr.json

│ ├── front-proxy-ca-csr.json

│ ├── front-proxy-client-csr.json

│ ├── k8s-ca-config.json

│ ├── k8s-ca-csr.json

│ ├── kube-proxy-csr.json

│ └── scheduler-csr.json

└── softwares

└── etcd

└── etcd.config.yml

9 directories, 49 files

[root@k8s-cluster241 k8s]#

[root@k8s-cluster242 ~]# tree /zhu/

/zhu/

├── certs

│ ├── etcd

│ │ ├── etcd-ca.csr

│ │ ├── etcd-ca-key.pem

│ │ ├── etcd-ca.pem

│ │ ├── etcd-server.csr

│ │ ├── etcd-server-key.pem

│ │ └── etcd-server.pem

│ ├── k8s

│ │ ├── admin.csr

│ │ ├── admin-key.pem

│ │ ├── admin.pem

│ │ ├── apiserver.csr

│ │ ├── apiserver-key.pem

│ │ ├── apiserver.pem

│ │ ├── controller-manager.csr

│ │ ├── controller-manager-key.pem

│ │ ├── controller-manager.pem

│ │ ├── front-proxy-ca.csr

│ │ ├── front-proxy-ca-key.pem

│ │ ├── front-proxy-ca.pem

│ │ ├── front-proxy-client.csr

│ │ ├── front-proxy-client-key.pem

│ │ ├── front-proxy-client.pem

│ │ ├── k8s-ca.csr

│ │ ├── k8s-ca-key.pem

│ │ ├── k8s-ca.pem

│ │ ├── kube-proxy.csr

│ │ ├── kube-proxy-key.pem

│ │ ├── kube-proxy.pem

│ │ ├── sa.key

│ │ ├── sa.pub

│ │ ├── scheduler.csr

│ │ ├── scheduler-key.pem

│ │ └── scheduler.pem

│ └── kubeconfig

│ ├── kube-admin.kubeconfig

│ ├── kube-controller-manager.kubeconfig

│ ├── kube-proxy.kubeconfig

│ └── kube-scheduler.kubeconfig

└── softwares

└── etcd

└── etcd.config.yml

6 directories, 37 files

[root@k8s-cluster242 ~]#

[root@k8s-cluster243 ~]# tree /zhu/

/zhu/

├── certs

│ ├── etcd

│ │ ├── etcd-ca.csr

│ │ ├── etcd-ca-key.pem

│ │ ├── etcd-ca.pem

│ │ ├── etcd-server.csr

│ │ ├── etcd-server-key.pem

│ │ └── etcd-server.pem

│ ├── k8s

│ │ ├── admin.csr

│ │ ├── admin-key.pem

│ │ ├── admin.pem

│ │ ├── apiserver.csr

│ │ ├── apiserver-key.pem

│ │ ├── apiserver.pem

│ │ ├── controller-manager.csr

│ │ ├── controller-manager-key.pem

│ │ ├── controller-manager.pem

│ │ ├── front-proxy-ca.csr

│ │ ├── front-proxy-ca-key.pem

│ │ ├── front-proxy-ca.pem

│ │ ├── front-proxy-client.csr

│ │ ├── front-proxy-client-key.pem

│ │ ├── front-proxy-client.pem

│ │ ├── k8s-ca.csr

│ │ ├── k8s-ca-key.pem

│ │ ├── k8s-ca.pem

│ │ ├── kube-proxy.csr

│ │ ├── kube-proxy-key.pem

│ │ ├── kube-proxy.pem

│ │ ├── sa.key

│ │ ├── sa.pub

│ │ ├── scheduler.csr

│ │ ├── scheduler-key.pem

│ │ └── scheduler.pem

│ └── kubeconfig

│ ├── kube-admin.kubeconfig

│ ├── kube-controller-manager.kubeconfig

│ ├── kube-proxy.kubeconfig

│ └── kube-scheduler.kubeconfig

└── softwares

└── etcd

└── etcd.config.yml

6 directories, 37 files

[root@k8s-cluster243 ~]#

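Tips:

- "--advertise-address" is the IP address of the corresponding master node.

- "--service-cluster-ip-range" is the Service (svc) network range.

- "--service-node-port-range" is the port range available to NodePort Services.

- "--etcd-servers" lists the etcd cluster endpoints.

Configuration reference: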

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-apiserver/
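The kube-apiserver unit files shown in this section for the three masters differ only in "--advertise-address". A minimal sketch of deriving each node's copy from a single file follows; the helper name `render_apiserver_unit` is hypothetical and not part of the original procedure.

```shell
# Hypothetical helper (not from the original procedure): read a kube-apiserver
# unit file on stdin and rewrite only the --advertise-address value so that it
# carries the given node IP. Every other line passes through unchanged.
render_apiserver_unit() {
  local node_ip="$1"
  sed -E "s#(--advertise-address=)[0-9.]+#\1${node_ip}#"
}
```

For example, `render_apiserver_unit 10.0.0.242 < /usr/lib/systemd/system/kube-apiserver.service` prints the unit file as it should look on k8s-cluster242.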

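Make sure etcd contains no stale data. If some data is already present, that is not a problem and this step can be skipped.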

[root@k8s-cluster241 ~]# etcdctl del "" --prefix

0

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# etcdctl get "" --prefix --keys-only

[root@k8s-cluster241 k8s]# cat /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--requestheader-allowed-names=front-proxy-client \

--v=2 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--allow-privileged=true \

--advertise-address=10.0.0.241 \

--service-cluster-ip-range=10.200.0.0/16 \

--service-node-port-range=3000-50000 \

--etcd-servers=https://10.0.0.241:2379,https://10.0.0.242:2379,https://10.0.0.243:2379 \

--etcd-cafile=/zhu/certs/etcd/etcd-ca.pem \

--etcd-certfile=/zhu/certs/etcd/etcd-server.pem \

--etcd-keyfile=/zhu/certs/etcd/etcd-server-key.pem \

--client-ca-file=/zhu/certs/k8s/k8s-ca.pem \

--tls-cert-file=/zhu/certs/k8s/apiserver.pem \

--tls-private-key-file=/zhu/certs/k8s/apiserver-key.pem \

--kubelet-client-certificate=/zhu/certs/k8s/apiserver.pem \

--kubelet-client-key=/zhu/certs/k8s/apiserver-key.pem \

--service-account-key-file=/zhu/certs/k8s/sa.pub \

--service-account-signing-key-file=/zhu/certs/k8s/sa.key \

--service-account-issuer=https://kubernetes.default.svc.zhubl.xyz \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/zhu/certs/k8s/front-proxy-ca.pem \

--proxy-client-cert-file=/zhu/certs/k8s/front-proxy-client.pem \

--proxy-client-key-file=/zhu/certs/k8s/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

systemctl daemon-reload && systemctl enable --now kube-apiserver

systemctl status kube-apiserver

ss -ntl | grep 6443

Once kube-apiserver is started, it immediately begins reading from and writing to etcd:

[root@k8s-cluster241 k8s]# etcdctl get "" --prefix --keys-only | head

/registry/apiregistration.k8s.io/apiservices/v1.

/registry/apiregistration.k8s.io/apiservices/v1.admissionregistration.k8s.io

/registry/apiregistration.k8s.io/apiservices/v1.apiextensions.k8s.io

/registry/apiregistration.k8s.io/apiservices/v1.apps

/registry/apiregistration.k8s.io/apiservices/v1.authentication.k8s.io

[root@k8s-cluster241 k8s]# etcdctl get "" --prefix --keys-only | wc -l

370

[root@k8s-cluster241 k8s]#

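Tips:

If kube-apiserver fails to start on a node, check its logs. Pay particular attention to the certificate files: at startup kube-apiserver directly loads the etcd, service account (sa), API server, and client authentication certificates.

If the certificates have not been synchronized, run 'data_rsync.sh /zhu/certs/k8s/' to sync them automatically.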
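Before digging through the logs, it can help to confirm that every certificate the unit file references is actually present on the node. A small sketch, assuming nothing beyond a POSIX shell; the helper name `missing_certs` is hypothetical.

```shell
# Hypothetical helper: print every path from the argument list that is missing
# or empty, and return non-zero if at least one such path was found.
missing_certs() {
  local rc=0 f
  for f in "$@"; do
    if [ ! -s "$f" ]; then
      echo "missing or empty: $f"
      rc=1
    fi
  done
  return "$rc"
}
```

A typical call would be `missing_certs /zhu/certs/etcd/etcd-ca.pem /zhu/certs/k8s/apiserver.pem /zhu/certs/k8s/sa.pub /zhu/certs/k8s/sa.key`, followed by `journalctl -u kube-apiserver` if all files are in place.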

[root@k8s-cluster242 ~]# cat /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--requestheader-allowed-names=front-proxy-client \

--v=2 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--allow-privileged=true \

--advertise-address=10.0.0.242 \

--service-cluster-ip-range=10.200.0.0/16 \

--service-node-port-range=3000-50000 \

--etcd-servers=https://10.0.0.241:2379,https://10.0.0.242:2379,https://10.0.0.243:2379 \

--etcd-cafile=/zhu/certs/etcd/etcd-ca.pem \

--etcd-certfile=/zhu/certs/etcd/etcd-server.pem \

--etcd-keyfile=/zhu/certs/etcd/etcd-server-key.pem \

--client-ca-file=/zhu/certs/k8s/k8s-ca.pem \

--tls-cert-file=/zhu/certs/k8s/apiserver.pem \

--tls-private-key-file=/zhu/certs/k8s/apiserver-key.pem \

--kubelet-client-certificate=/zhu/certs/k8s/apiserver.pem \

--kubelet-client-key=/zhu/certs/k8s/apiserver-key.pem \

--service-account-key-file=/zhu/certs/k8s/sa.pub \

--service-account-signing-key-file=/zhu/certs/k8s/sa.key \

--service-account-issuer=https://kubernetes.default.svc.zhubl.xyz \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/zhu/certs/k8s/front-proxy-ca.pem \

--proxy-client-cert-file=/zhu/certs/k8s/front-proxy-client.pem \

--proxy-client-key-file=/zhu/certs/k8s/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

[root@k8s-cluster242 ~]#

systemctl daemon-reload && systemctl enable --now kube-apiserver

systemctl status kube-apiserver

ss -ntl | grep 6443

[root@k8s-cluster243 ~]# cat /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--requestheader-allowed-names=front-proxy-client \

--v=2 \

--bind-address=0.0.0.0 \

--secure-port=6443 \

--allow-privileged=true \

--advertise-address=10.0.0.243 \

--service-cluster-ip-range=10.200.0.0/16 \

--service-node-port-range=3000-50000 \

--etcd-servers=https://10.0.0.241:2379,https://10.0.0.242:2379,https://10.0.0.243:2379 \

--etcd-cafile=/zhu/certs/etcd/etcd-ca.pem \

--etcd-certfile=/zhu/certs/etcd/etcd-server.pem \

--etcd-keyfile=/zhu/certs/etcd/etcd-server-key.pem \

--client-ca-file=/zhu/certs/k8s/k8s-ca.pem \

--tls-cert-file=/zhu/certs/k8s/apiserver.pem \

--tls-private-key-file=/zhu/certs/k8s/apiserver-key.pem \

--kubelet-client-certificate=/zhu/certs/k8s/apiserver.pem \

--kubelet-client-key=/zhu/certs/k8s/apiserver-key.pem \

--service-account-key-file=/zhu/certs/k8s/sa.pub \

--service-account-signing-key-file=/zhu/certs/k8s/sa.key \

--service-account-issuer=https://kubernetes.default.svc.zhubl.xyz \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,NodeRestriction,ResourceQuota \

--authorization-mode=Node,RBAC \

--enable-bootstrap-token-auth=true \

--requestheader-client-ca-file=/zhu/certs/k8s/front-proxy-ca.pem \

--proxy-client-cert-file=/zhu/certs/k8s/front-proxy-client.pem \

--proxy-client-key-file=/zhu/certs/k8s/front-proxy-client-key.pem \

--requestheader-allowed-names=aggregator \

--requestheader-group-headers=X-Remote-Group \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-username-headers=X-Remote-User

Restart=on-failure

RestartSec=10s

LimitNOFILE=65535

[Install]

WantedBy=multi-user.target

systemctl daemon-reload && systemctl enable --now kube-apiserver

systemctl status kube-apiserver

ss -ntl | grep 6443

[root@k8s-cluster241 ~]# etcdctl get "" --prefix --keys-only | wc -l

378

[root@k8s-cluster241 ~]#

Tips:

- "--cluster-cidr" is the Pod network range; you can change it to suit your environment.

Configuration reference:

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-controller-manager/
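With "--cluster-cidr=10.100.0.0/16" and "--node-cidr-mask-size=24" (the values used in the unit file in this section), each node receives one /24 carved out of the /16. The arithmetic can be sketched as follows; the function names are illustrative only.

```shell
# Number of per-node Pod subnets that fit in the cluster CIDR:
# 2^(node_mask - cluster_prefix).
node_subnet_count() {
  local cluster_prefix="$1" node_mask="$2"
  echo $(( 1 << (node_mask - cluster_prefix) ))
}

# Number of addresses in one per-node subnet: 2^(32 - node_mask).
addresses_per_node() {
  local node_mask="$1"
  echo $(( 1 << (32 - node_mask) ))
}
```

Here `node_subnet_count 16 24` gives 256 node subnets and `addresses_per_node 24` gives 256 addresses per node, so changing either value changes how many nodes or Pods per node the range can hold.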

The controller-manager unit file is identical on all nodes (provided the certificate files are stored at the same paths).

cat > /usr/lib/systemd/system/kube-controller-manager.service << 'EOF'

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-controller-manager \

--v=2 \

--root-ca-file=/zhu/certs/k8s/k8s-ca.pem \

--cluster-signing-cert-file=/zhu/certs/k8s/k8s-ca.pem \

--cluster-signing-key-file=/zhu/certs/k8s/k8s-ca-key.pem \

--service-account-private-key-file=/zhu/certs/k8s/sa.key \

--kubeconfig=/zhu/certs/kubeconfig/kube-controller-manager.kubeconfig \

--leader-elect=true \

--use-service-account-credentials=true \

--node-monitor-grace-period=40s \

--node-monitor-period=5s \

--controllers=*,bootstrapsigner,tokencleaner \

--allocate-node-cidrs=true \

--cluster-cidr=10.100.0.0/16 \

--requestheader-client-ca-file=/zhu/certs/k8s/front-proxy-ca.pem \

--node-cidr-mask-size=24

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

ss -ntl | grep 10257

Configuration reference:

https://kubernetes.io/zh-cn/docs/reference/command-line-tools-reference/kube-scheduler/

The kube-scheduler unit file is identical on all nodes (provided the certificate files are stored at the same paths).

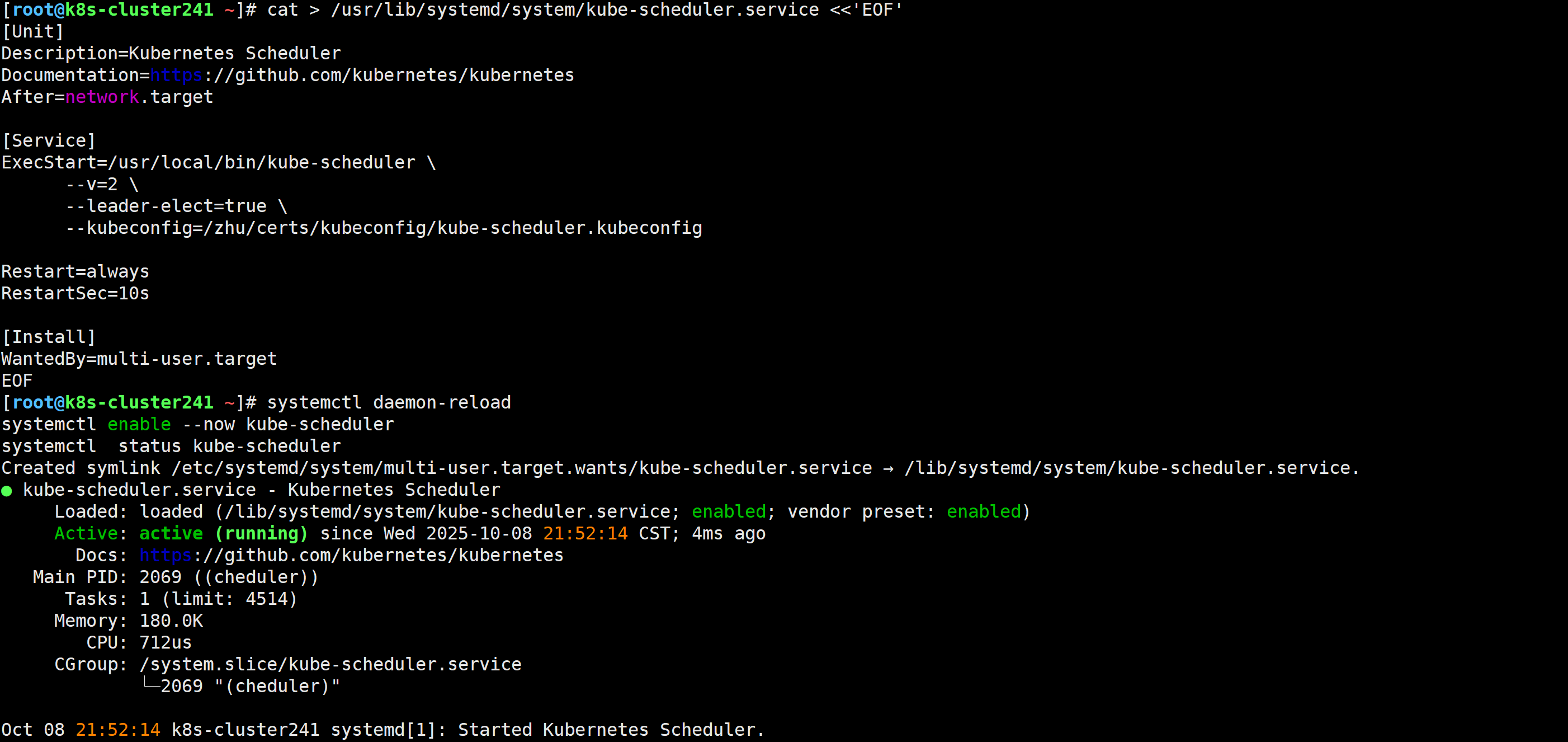

cat > /usr/lib/systemd/system/kube-scheduler.service <<'EOF'

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-scheduler \

--v=2 \

--leader-elect=true \

--kubeconfig=/zhu/certs/kubeconfig/kube-scheduler.kubeconfig

Restart=always

RestartSec=10s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler

ss -ntl | grep 10259

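Tips:

- The high-availability components could also be deployed on two dedicated virtual machines; to save two machines, they are colocated on the master nodes here.

- When installing K8S on a public cloud, there is no need to install these high-availability components, since most public clouds do not support keepalived. Use the provider's load balancer product instead, such as Alibaba Cloud "SLB" or Tencent Cloud "ELB".

- ELB is recommended. SLB has a loopback problem: servers proxied by the SLB cannot connect back to the SLB itself, whereas Tencent Cloud has fixed this issue.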

apt update

apt-get -y install keepalived haproxy

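Tips:

- haproxy is configured to listen on port 8443 here; you may choose another port. haproxy reverse-proxies to the kube-apiserver on each master node.

- If you do change the port, remember to change the server address in the kubeconfig files generated earlier as well; otherwise connections to the cluster will fail.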
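One way to confirm that the kubeconfig files and the haproxy listener agree is to extract the port from each kubeconfig "server:" entry and compare it with the haproxy "bind" port. A minimal sketch; the helper name `kubeconfig_server_ports` is hypothetical.

```shell
# Hypothetical helper: print the port of every "server:" URL found in a
# kubeconfig read from stdin, one port per line.
kubeconfig_server_ports() {
  sed -nE 's#^[[:space:]]*server:[[:space:]]*https?://[^:/]+:([0-9]+).*#\1#p'
}
```

Running `kubeconfig_server_ports < /zhu/certs/kubeconfig/kube-admin.kubeconfig` should print the same port that haproxy binds to, which is 8443 in this setup.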

cp /etc/haproxy/haproxy.cfg{,`date +%F`}

cat > /etc/haproxy/haproxy.cfg <<'EOF'

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-haproxy

bind *:9999

mode http

option httplog

monitor-uri /ruok

frontend zhu-k8s

bind 0.0.0.0:8443

bind 127.0.0.1:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend zhu-k8s

backend zhu-k8s

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server k8s-cluster241 10.0.0.241:6443 check

server k8s-cluster242 10.0.0.242:6443 check

server k8s-cluster243 10.0.0.243:6443 check

EOF

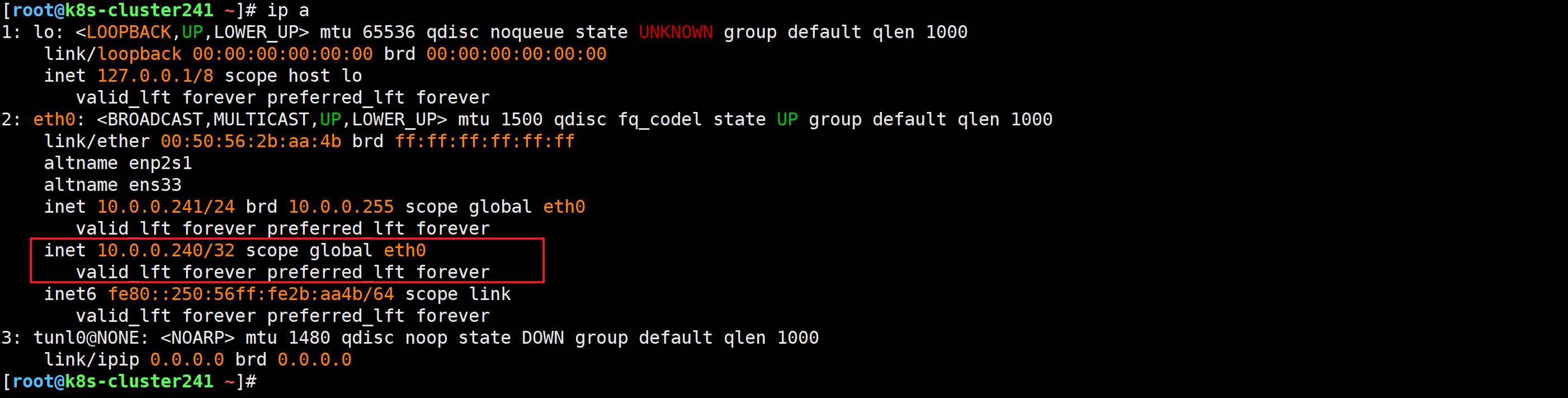

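Tips:

- "interface" must be the name of your physical NIC. If your NIC is ens33, change "eth0" to "ens33".

- "mcast_src_ip" is different on each master node; set it according to your environment.

- "virtual_ipaddress" is the VIP of the load balancer. It must match the API server address in the kubeconfig files.

- The "script" field points to the script that checks whether the local load balancer in front of the API servers is healthy.

- "router_id" is the node IP; each master node uses its own IP.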
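To find the value for "interface" on a given node, the NIC that carries the node's IP can be read from the output of `ip -o -4 addr show`. A small sketch; the helper name `iface_for_ip` is hypothetical.

```shell
# Hypothetical helper: given the output of `ip -o -4 addr show` on stdin,
# print the name of the interface that carries the given IPv4 address.
iface_for_ip() {
  local want="$1"
  awk -v want="$want" '$3 == "inet" { split($4, a, "/"); if (a[1] == want) print $2 }'
}
```

On k8s-cluster241 this would be used as `ip -o -4 addr show | iface_for_ip 10.0.0.241`, and the printed name goes into the "interface" field.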

[root@k8s-cluster241 ~]# cat > /etc/keepalived/keepalived.conf <<'EOF'

! Configuration File for keepalived

global_defs {

router_id 10.0.0.241

}

vrrp_script chk_lb {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.241

nopreempt

authentication {

auth_type PASS

auth_pass k8s

}

track_script {

chk_lb

}

virtual_ipaddress {

10.0.0.240

}

}

EOF

[root@k8s-cluster242 ~]# cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id 10.0.0.242

}

vrrp_script chk_lb {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.242

nopreempt

authentication {

auth_type PASS

auth_pass k8s

}

track_script {

chk_lb

}

virtual_ipaddress {

10.0.0.240

}

}

EOF

[root@k8s-cluster243 ~]# cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id 10.0.0.243

}

vrrp_script chk_lb {

script "/etc/keepalived/check_port.sh 8443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.0.0.243

nopreempt

authentication {

auth_type PASS

auth_pass k8s

}

track_script {

chk_lb

}

virtual_ipaddress {

10.0.0.240

}

}

EOF

cat > /etc/keepalived/check_port.sh <<'EOF'

#!/bin/bash

CHK_PORT=$1

if [ -n "$CHK_PORT" ];then

PORT_PROCESS=`ss -ltn | grep ":$CHK_PORT " | wc -l`

if [ $PORT_PROCESS -eq 0 ];then

echo "Port $CHK_PORT Is Not Used,End."

systemctl stop keepalived

fi

else

echo "Check Port Cant Be Empty!"

fi

EOF

chmod +x /etc/keepalived/check_port.sh

systemctl enable --now haproxy

systemctl restart haproxy

systemctl status haproxy

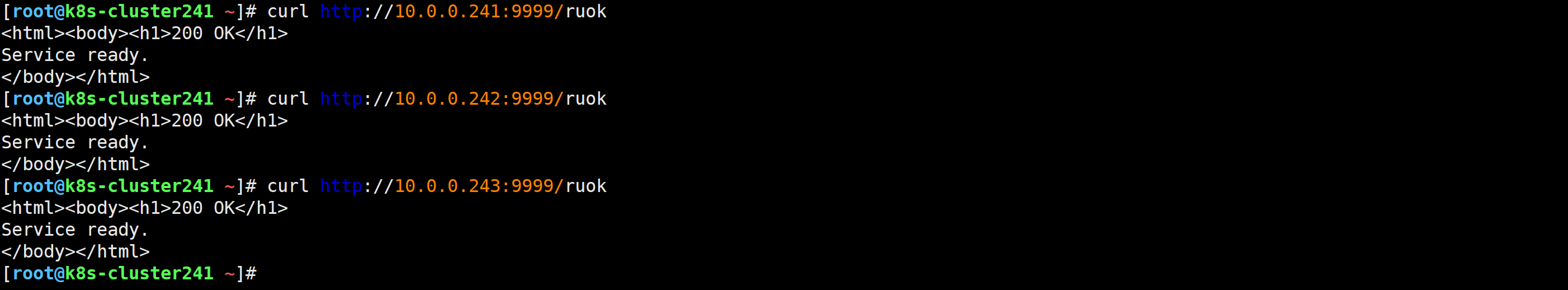

ss -ntl | egrep "8443|9999"

curl http://10.0.0.241:9999/ruok

systemctl daemon-reload

systemctl enable --now keepalived

systemctl status keepalived

ip a

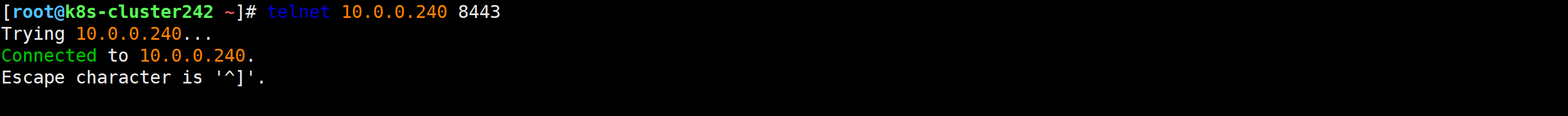

[root@k8s-cluster241 ~]# telnet 10.0.0.240 8443

systemctl stop haproxy.service

ip a

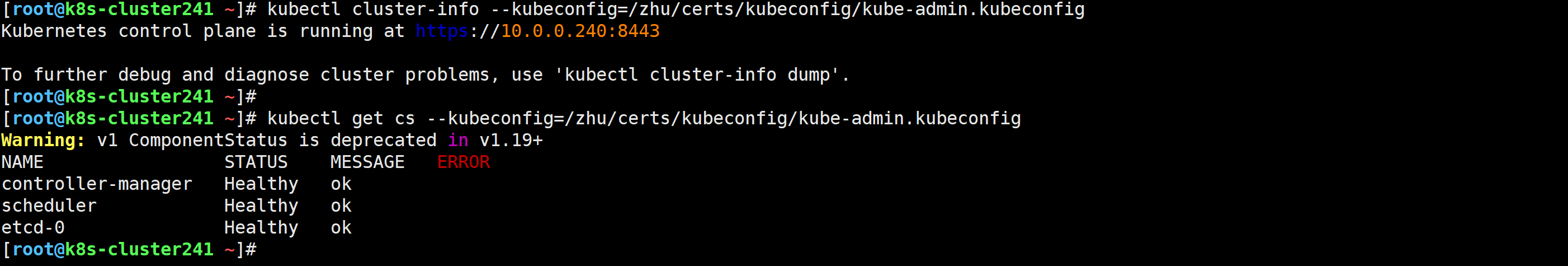

[root@k8s-cluster241 ~]# kubectl cluster-info --kubeconfig=/zhu/certs/kubeconfig/kube-admin.kubeconfig

Kubernetes control plane is running at https://10.0.0.240:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl get cs --kubeconfig=/zhu/certs/kubeconfig/kube-admin.kubeconfig

mkdir -p $HOME/.kube

cp -i /zhu/certs/kubeconfig/kube-admin.kubeconfig $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-cluster241 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy ok

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl cluster-info

Kubernetes control plane is running at https://10.0.0.240:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@k8s-cluster241 ~]#

[root@k8s-cluster242 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy ok

[root@k8s-cluster242 ~]#

[root@k8s-cluster243 ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy ok

[root@k8s-cluster243 ~]#

kubectl completion bash > ~/.kube/completion.bash.inc

echo source '$HOME/.kube/completion.bash.inc' >> ~/.bashrc

source ~/.bashrc

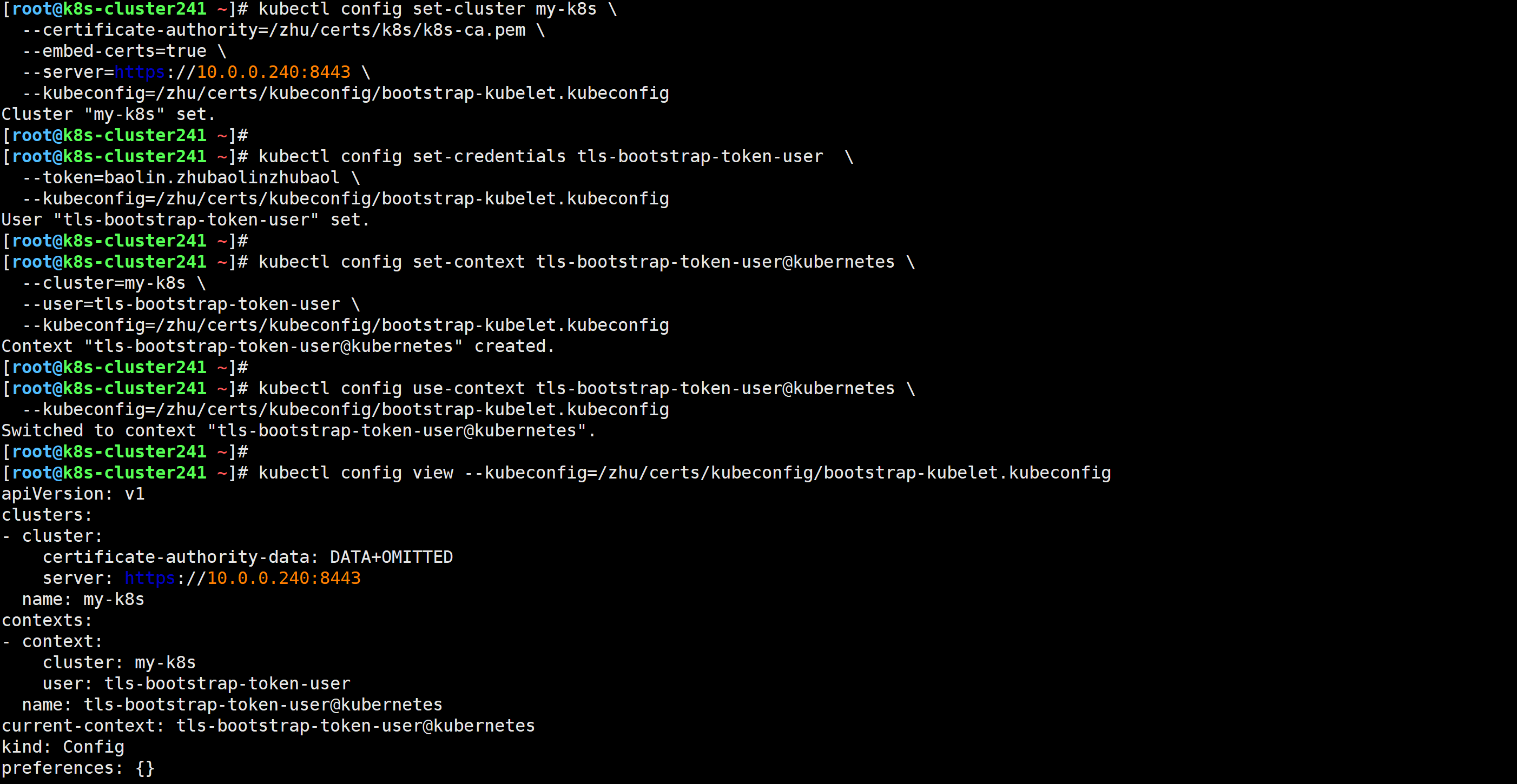

kubectl config set-cluster my-k8s \

--certificate-authority=/zhu/certs/k8s/k8s-ca.pem \

--embed-certs=true \

--server=https://10.0.0.240:8443 \

--kubeconfig=/zhu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

kubectl config set-credentials tls-bootstrap-token-user \

--token=baolin.zhubaolinzhubaol \

--kubeconfig=/zhu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

kubectl config set-context tls-bootstrap-token-user@kubernetes \

--cluster=my-k8s \

--user=tls-bootstrap-token-user \

--kubeconfig=/zhu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

kubectl config use-context tls-bootstrap-token-user@kubernetes \

--kubeconfig=/zhu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

kubectl config view --kubeconfig=/zhu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

[root@k8s-cluster241 ~]# data_rsync.sh /zhu/certs/kubeconfig/bootstrap-kubelet.kubeconfig

===== rsyncing k8s-cluster242: bootstrap-kubelet.kubeconfig =====

命令执行成功!

===== rsyncing k8s-cluster243: bootstrap-kubelet.kubeconfig =====

命令执行成功!

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# cat bootstrap-secret.yaml

apiVersion: v1

kind: Secret

metadata:

name: bootstrap-token-baolin

namespace: kube-system

type: bootstrap.kubernetes.io/token

stringData:

description: "The default bootstrap token generated by 'kubelet '."

token-id: baolin

token-secret: zhubaolinzhubaol

usage-bootstrap-authentication: "true"

usage-bootstrap-signing: "true"

auth-extra-groups: system:bootstrappers:default-node-token,system:bootstrappers:worker,system:bootstrappers:ingress

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kubelet-bootstrap

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:node-bootstrapper

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: node-autoapprove-bootstrap

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:nodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:bootstrappers:default-node-token

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: node-autoapprove-certificate-rotation

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:certificates.k8s.io:certificatesigningrequests:selfnodeclient

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: Group

name: system:nodes

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

annotations:

rbac.authorization.kubernetes.io/autoupdate: "true"

labels:

kubernetes.io/bootstrapping: rbac-defaults

name: system:kube-apiserver-to-kubelet

rules:

- apiGroups:

- ""

resources:

- nodes/proxy

- nodes/stats

- nodes/log

- nodes/spec

- nodes/metrics

verbs:

- "*"

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: system:kube-apiserver

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:kube-apiserver-to-kubelet

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: User

name: kube-apiserver

[root@k8s-cluster241 ~]#
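The value passed to "--token" in bootstrap-kubelet.kubeconfig is the Secret's "token-id" and "token-secret" joined by a dot. Kubernetes expects bootstrap tokens to match `[a-z0-9]{6}.[a-z0-9]{16}` and looks the Secret up under the name `bootstrap-token-<token-id>`. A sketch of checking a token before applying the manifest; all function names are hypothetical.

```shell
# Return 0 if the argument has the bootstrap-token shape
# <token-id>.<token-secret>, i.e. [a-z0-9]{6}.[a-z0-9]{16}.
is_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Split a token into the two fields stored in the Secret.
token_id()     { printf '%s\n' "${1%%.*}"; }
token_secret() { printf '%s\n' "${1#*.}"; }

# Name under which Kubernetes looks up the Secret for this token.
bootstrap_secret_name() { printf 'bootstrap-token-%s\n' "$(token_id "$1")"; }
```

For the token used here, `is_bootstrap_token baolin.zhubaolinzhubaol` succeeds and `bootstrap_secret_name baolin.zhubaolinzhubaol` prints `bootstrap-token-baolin`.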

[root@k8s-cluster241 ~]# etcdctl get "/" --prefix --keys-only | wc -l

470

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl apply -f bootstrap-secret.yaml

secret/bootstrap-token created

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-bootstrap created

clusterrolebinding.rbac.authorization.k8s.io/node-autoapprove-certificate-rotation created

clusterrole.rbac.authorization.k8s.io/system:kube-apiserver-to-kubelet created

clusterrolebinding.rbac.authorization.k8s.io/system:kube-apiserver created

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# etcdctl get "/" --prefix --keys-only | wc -l

482

[root@k8s-cluster241 ~]#

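Tips:

- The "kubelet.kubeconfig" file passed via "--kubeconfig" in "10-kubelet.conf" does not exist yet; it is generated automatically later.

- "clusterDNS" is the DNS server address; it can be customized, for example "10.200.0.254".

- "clusterDomain" is the cluster domain; it must match the domain planned for the cluster, for example "zhubl.xyz".

- In "10-kubelet.conf", "ExecStart=" must be written twice: the first, empty entry clears the ExecStart inherited from kubelet.service, and the second sets the real command. Otherwise kubelet may fail to start.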
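Because the "clusterDNS" address later becomes the ClusterIP of the DNS Service, it has to fall inside "--service-cluster-ip-range" (10.200.0.0/16 in this setup). A minimal sketch of that check in plain shell arithmetic; the function names are hypothetical, and octets are assumed to be written without leading zeros.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Return 0 if IPv4 address $1 lies inside CIDR $2 (for example 10.200.0.0/16).
ip_in_cidr() {
  local ip net prefix mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  prefix="${2#*/}"
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}
```

`ip_in_cidr 10.200.0.254 10.200.0.0/16` succeeds, while an address from the Pod range such as 10.100.0.1 fails the same check.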

mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/

cat > /etc/kubernetes/kubelet-conf.yml <<'EOF'

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

authentication:

anonymous:

enabled: false

webhook:

cacheTTL: 2m0s

enabled: true

x509:

clientCAFile: /zhu/certs/k8s/k8s-ca.pem

authorization:

mode: Webhook

webhook:

cacheAuthorizedTTL: 5m0s

cacheUnauthorizedTTL: 30s

cgroupDriver: systemd

cgroupsPerQOS: true

clusterDNS:

- 10.200.0.254

clusterDomain: zhubl.xyz

containerLogMaxFiles: 5

containerLogMaxSize: 10Mi

contentType: application/vnd.kubernetes.protobuf

cpuCFSQuota: true

cpuManagerPolicy: none

cpuManagerReconcilePeriod: 10s

enableControllerAttachDetach: true

enableDebuggingHandlers: true

enforceNodeAllocatable:

- pods

eventBurst: 10

eventRecordQPS: 5

evictionHard:

imagefs.available: 15%

memory.available: 100Mi

nodefs.available: 10%

nodefs.inodesFree: 5%

evictionPressureTransitionPeriod: 5m0s

failSwapOn: true

fileCheckFrequency: 20s

hairpinMode: promiscuous-bridge

healthzBindAddress: 127.0.0.1

healthzPort: 10248

httpCheckFrequency: 20s

imageGCHighThresholdPercent: 85

imageGCLowThresholdPercent: 80

imageMinimumGCAge: 2m0s

iptablesDropBit: 15

iptablesMasqueradeBit: 14

kubeAPIBurst: 10

kubeAPIQPS: 5

makeIPTablesUtilChains: true

maxOpenFiles: 1000000

maxPods: 110

nodeStatusUpdateFrequency: 10s

oomScoreAdj: -999

podPidsLimit: -1

registryBurst: 10

registryPullQPS: 5

resolvConf: /etc/kubernetes/resolv.conf

rotateCertificates: true

runtimeRequestTimeout: 2m0s

serializeImagePulls: true

staticPodPath: /etc/kubernetes/manifests

streamingConnectionIdleTimeout: 4h0m0s

syncFrequency: 1m0s

volumeStatsAggPeriod: 1m0s

EOF

cat > /usr/lib/systemd/system/kubelet.service <<'EOF'

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/kubernetes/kubernetes

After=containerd.service

Requires=containerd.service

[Service]

ExecStart=/usr/local/bin/kubelet

Restart=always

StartLimitInterval=0

RestartSec=10

[Install]

WantedBy=multi-user.target

EOF

cat > /etc/systemd/system/kubelet.service.d/10-kubelet.conf <<'EOF'

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/zhu/certs/kubeconfig/bootstrap-kubelet.kubeconfig --kubeconfig=/zhu/certs/kubeconfig/kubelet.kubeconfig"

Environment="KUBELET_CONFIG_ARGS=--config=/etc/kubernetes/kubelet-conf.yml"

Environment="KUBELET_SYSTEM_ARGS=--container-runtime-endpoint=unix:///run/containerd/containerd.sock"

Environment="KUBELET_EXTRA_ARGS=--node-labels=node.kubernetes.io/node='' "

ExecStart=

ExecStart=/usr/local/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_SYSTEM_ARGS $KUBELET_EXTRA_ARGS

EOF

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet

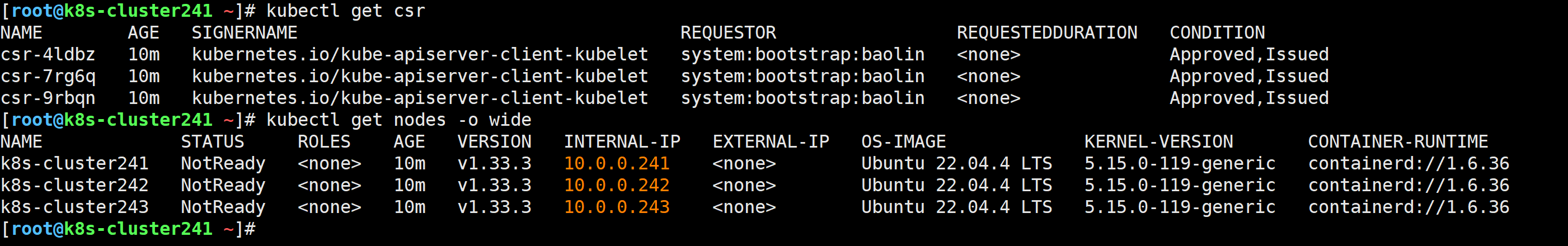

kubectl get csr

kubectl get nodes -o wide

cat > /etc/kubernetes/kube-proxy.yml << EOF

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

bindAddress: 0.0.0.0

metricsBindAddress: 127.0.0.1:10249

clientConnection:

acceptContentTypes: ""

burst: 10

contentType: application/vnd.kubernetes.protobuf

kubeconfig: /zhu/certs/kubeconfig/kube-proxy.kubeconfig

qps: 5

clusterCIDR: 10.100.0.0/16

configSyncPeriod: 15m0s

conntrack:

max: null

maxPerCore: 32768

min: 131072

tcpCloseWaitTimeout: 1h0m0s

tcpEstablishedTimeout: 24h0m0s

enableProfiling: false

healthzBindAddress: 0.0.0.0:10256

hostnameOverride: ""

iptables:

masqueradeAll: false

masqueradeBit: 14

minSyncPeriod: 0s

ipvs:

masqueradeAll: true

minSyncPeriod: 5s

scheduler: "rr"

syncPeriod: 30s

mode: "ipvs"

nodePortAddresses: null

oomScoreAdj: -999

portRange: ""

udpIdleTimeout: 250ms

EOF

cat > /usr/lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-proxy \

--config=/etc/kubernetes/kube-proxy.yml \

--v=2

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

[root@k8s-cluster241 ~]# etcdctl get "/" --prefix --keys-only | wc -l

512

[root@k8s-cluster241 ~]#

systemctl daemon-reload && systemctl enable --now kube-proxy

systemctl status kube-proxy

ss -ntl |egrep "10256|10249"

This shows that once kube-proxy starts, it also talks to kube-apiserver, which in turn writes data into etcd:

[root@k8s-cluster241 ~]# etcdctl get "/" --prefix --keys-only | wc -l

518

[root@k8s-cluster241 ~]#
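The line counts only show that something was written. To see which keys appeared, the key listing can be saved before and after a step and compared. A sketch under the assumption that both files hold the output of `etcdctl get "/" --prefix --keys-only`; the helper name `new_etcd_keys` is hypothetical. As far as I can tell, etcdctl prints an empty line after each key, so the helper drops empty lines first.

```shell
# Hypothetical helper: print the keys that are present in the "after" dump but
# not in the "before" dump. Empty lines are ignored in both files.
new_etcd_keys() {
  local before="$1" after="$2" a b
  a="$(mktemp)"
  b="$(mktemp)"
  grep -v '^$' "$before" | sort > "$a"
  grep -v '^$' "$after" | sort > "$b"
  comm -13 "$a" "$b"    # column 3 suppressed too: only lines unique to "after"
  rm -f "$a" "$b"
}
```

For example, dump the keys to before.txt, start kube-proxy, dump again to after.txt, then run `new_etcd_keys before.txt after.txt`.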

Reference:

https://docs.tigera.io/calico/latest/getting-started/kubernetes/quickstart#step-2-install-calico

[root@k8s-cluster241 ~]# cat > /etc/kubernetes/resolv.conf <<EOF

nameserver 223.5.5.5

options edns0 trust-ad

search .

EOF

[root@k8s-cluster241 ~]# data_rsync.sh /etc/kubernetes/resolv.conf

[root@k8s-cluster241 ~]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.30.2/manifests/tigera-operator.yaml

[root@k8s-cluster241 ~]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.30.2/manifests/custom-resources.yaml

export http_proxy=http://10.0.0.1:7890 https_proxy=http://10.0.0.1:7890

ctr -n k8s.io i pull quay.io/tigera/operator:v1.38.3

ctr -n k8s.io i pull docker.io/calico/apiserver:v3.30.2

ctr -n k8s.io i pull docker.io/calico/kube-controllers:v3.30.2

ctr -n k8s.io i pull docker.io/calico/cni:v3.30.2

ctr -n k8s.io i pull docker.io/calico/typha:v3.30.2

ctr -n k8s.io i pull docker.io/calico/csi:v3.30.2

ctr -n k8s.io i pull docker.io/calico/goldmane:v3.30.2

ctr -n k8s.io i pull docker.io/calico/whisker:v3.30.2

ctr -n k8s.io i pull docker.io/calico/pod2daemon-flexvol:v3.30.2

ctr -n k8s.io i pull docker.io/calico/node-driver-registrar:v3.30.2

ctr -n k8s.io i pull docker.io/calico/whisker-backend:v3.30.2

ctr -n k8s.io i pull docker.io/calico/node:v3.30.2

ctr -n k8s.io i export tigera-operator-v1.38.3.tar.gz quay.io/tigera/operator:v1.38.3
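Only one export command is shown, while the directory listing that follows contains one tarball per pulled image. The remaining exports presumably follow the same naming pattern, which can be sketched as below; the helper name `image_tarball_name` is hypothetical and the naming rule is inferred from the listed file names.

```shell
# Hypothetical helper: derive the tarball name used in this guide from an image
# reference by dropping the registry host and replacing "/" and ":" with "-".
image_tarball_name() {
  local ref="${1#*/}"
  printf '%s.tar.gz\n' "$(printf '%s' "$ref" | sed 's#[/:]#-#g')"
}

# Usage sketch (requires containerd's ctr and the images pulled as above):
#   for img in docker.io/calico/node:v3.30.2 docker.io/calico/cni:v3.30.2; do
#     ctr -n k8s.io i export "$(image_tarball_name "$img")" "$img"
#   done
```

The loop in the comment uses the same `ctr -n k8s.io i export <file> <image>` form as the single command above.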

[root@k8s-cluster242 calico]# ls -1

calico-apiserver-v3.30.2.tar.gz

calico-cni-v3.30.2.tar.gz

calico-csi-v3.30.2.tar.gz

calico-goldmane-v3.30.2.tar.gz

calico-kube-controllers-v3.30.2.tar.gz

calico-node-driver-registrar-v3.30.2.tar.gz

calico-node-v3.30.2.tar.gz

calico-pod2daemon-flexvol-v3.30.2.tar.gz

calico-typha-v3.30.2.tar.gz

calico-whisker-backend-v3.30.2.tar.gz

calico-whisker-v3.30.2.tar.gz

tigera-operator-v1.38.3.tar.gz

for i in *.tar.gz; do ctr -n k8s.io i import "$i"; done

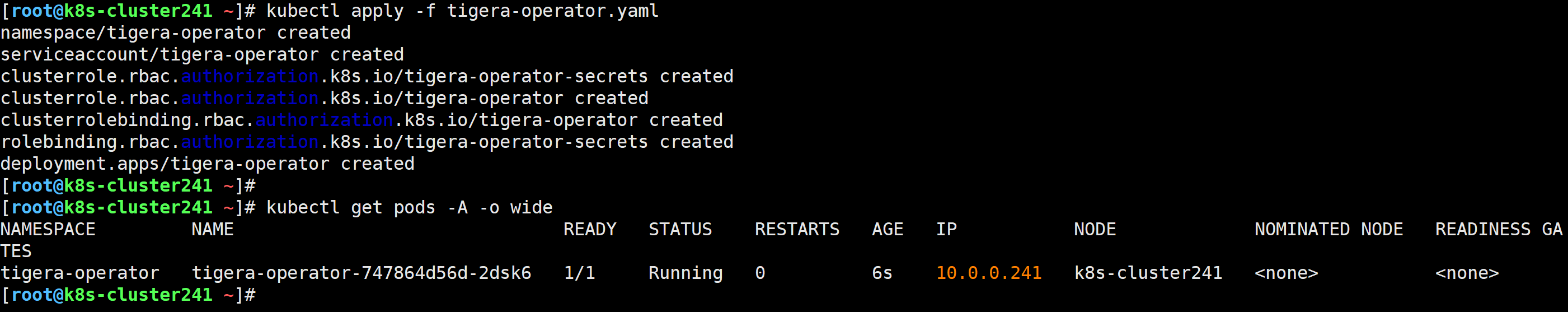

[root@k8s-cluster241 ~]# kubectl apply -f tigera-operator.yaml

namespace/tigera-operator created

serviceaccount/tigera-operator created

clusterrole.rbac.authorization.k8s.io/tigera-operator-secrets created

clusterrole.rbac.authorization.k8s.io/tigera-operator created

clusterrolebinding.rbac.authorization.k8s.io/tigera-operator created

rolebinding.rbac.authorization.k8s.io/tigera-operator-secrets created

deployment.apps/tigera-operator created

[root@k8s-cluster241 ~]# grep 16 custom-resources.yaml

cidr: 192.168.0.0/16

[root@k8s-cluster241 ~]# sed -i '/16/s#192.168#10.100#' custom-resources.yaml

[root@k8s-cluster241 ~]# grep 16 custom-resources.yaml

cidr: 10.100.0.0/16

[root@k8s-cluster241 ~]# grep blockSize custom-resources.yaml

blockSize: 26

[root@k8s-cluster241 ~]# sed -i '/blockSize/s#26#24#' custom-resources.yaml

[root@k8s-cluster241 ~]# grep blockSize custom-resources.yaml

blockSize: 24

[root@k8s-cluster241 ~]# kubectl create -f custom-resources.yaml

installation.operator.tigera.io/default created

apiserver.operator.tigera.io/default created

goldmane.operator.tigera.io/default created

whisker.operator.tigera.io/default created

[root@k8s-cluster241 ~]#

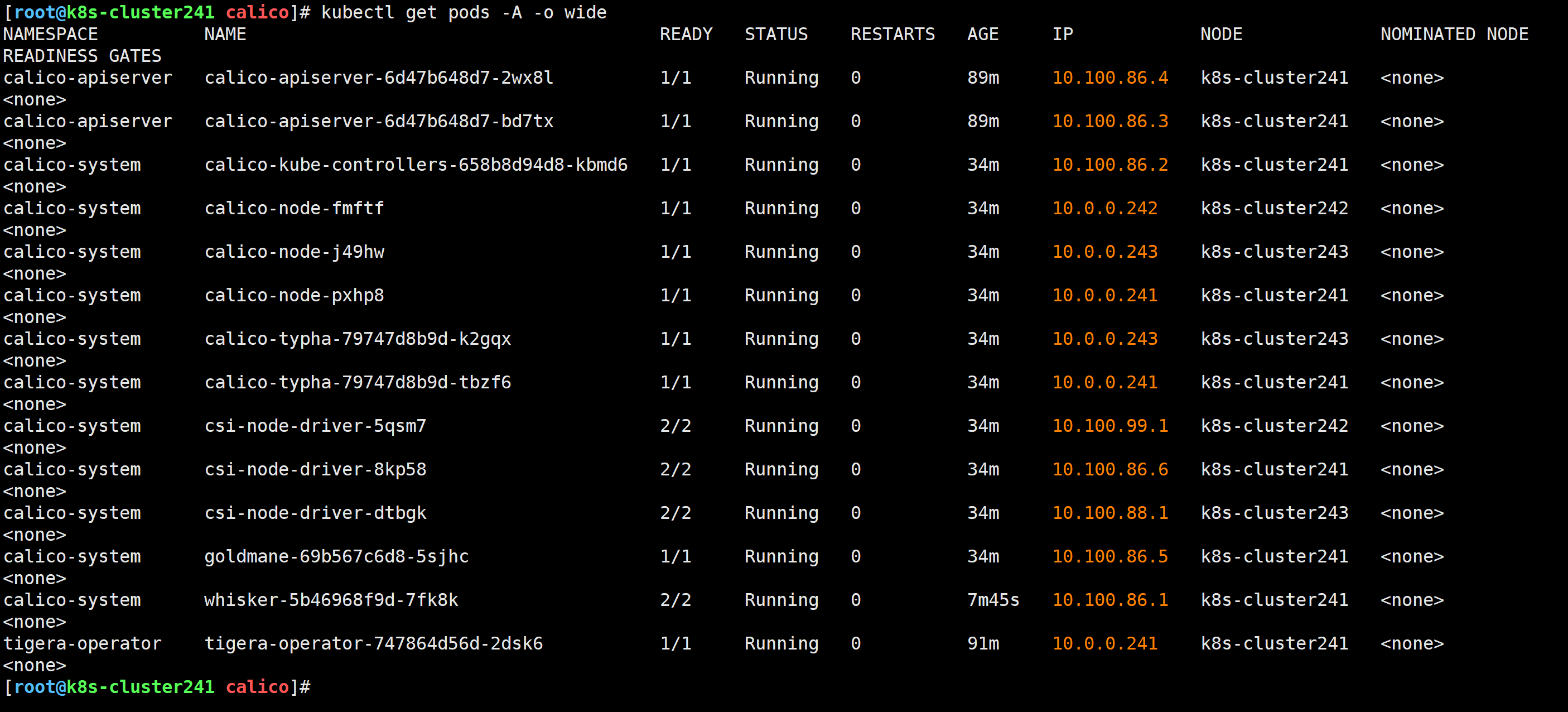

[root@k8s-cluster241 calico]# kubectl get pods -A -o wide

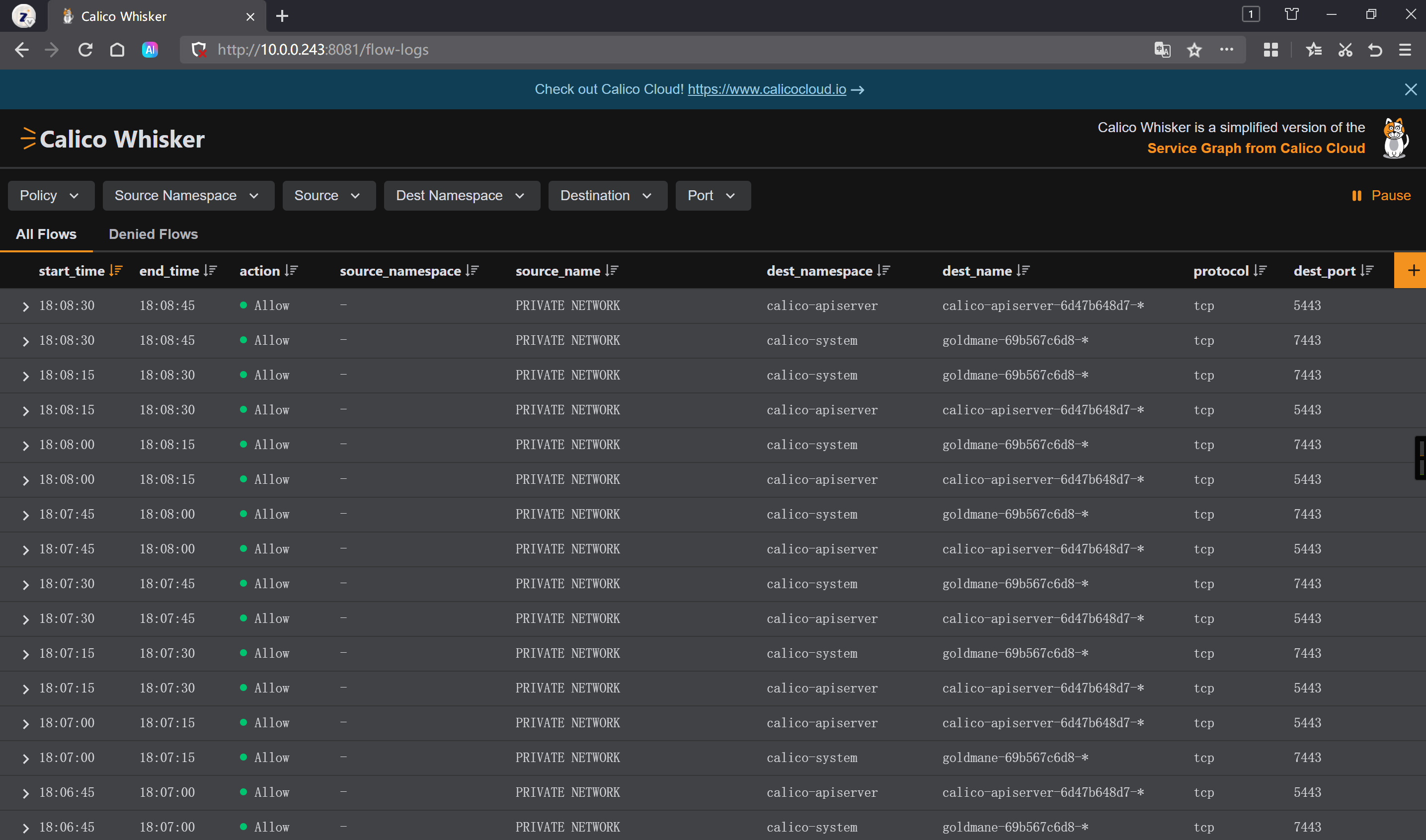

[root@k8s-cluster243 ~]# kubectl port-forward -n calico-system service/whisker 8081:8081 --address 0.0.0.0

Forwarding from 0.0.0.0:8081 -> 8081

http://10.0.0.243:8081/flow-logs

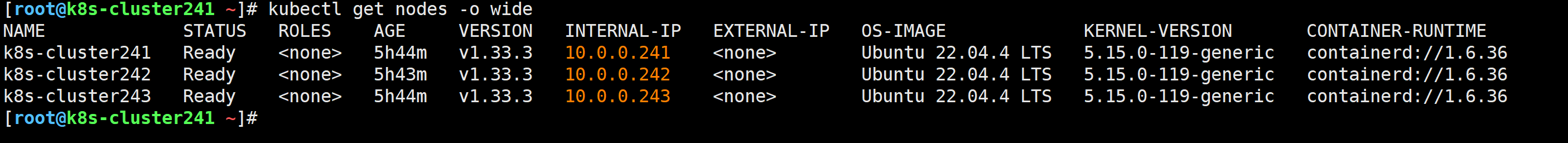

[root@k8s-cluster241 ~]# kubectl get nodes -o wide

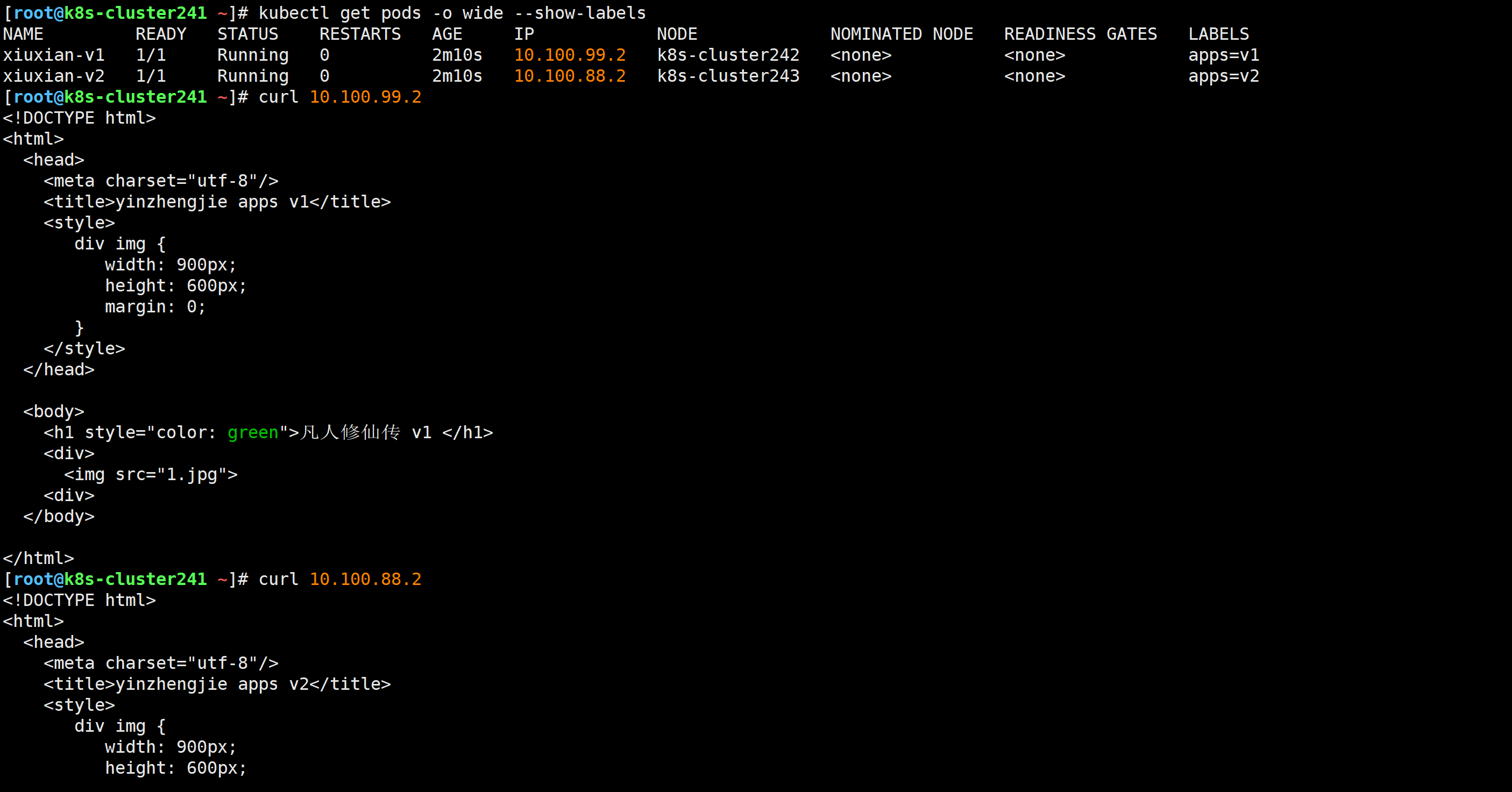

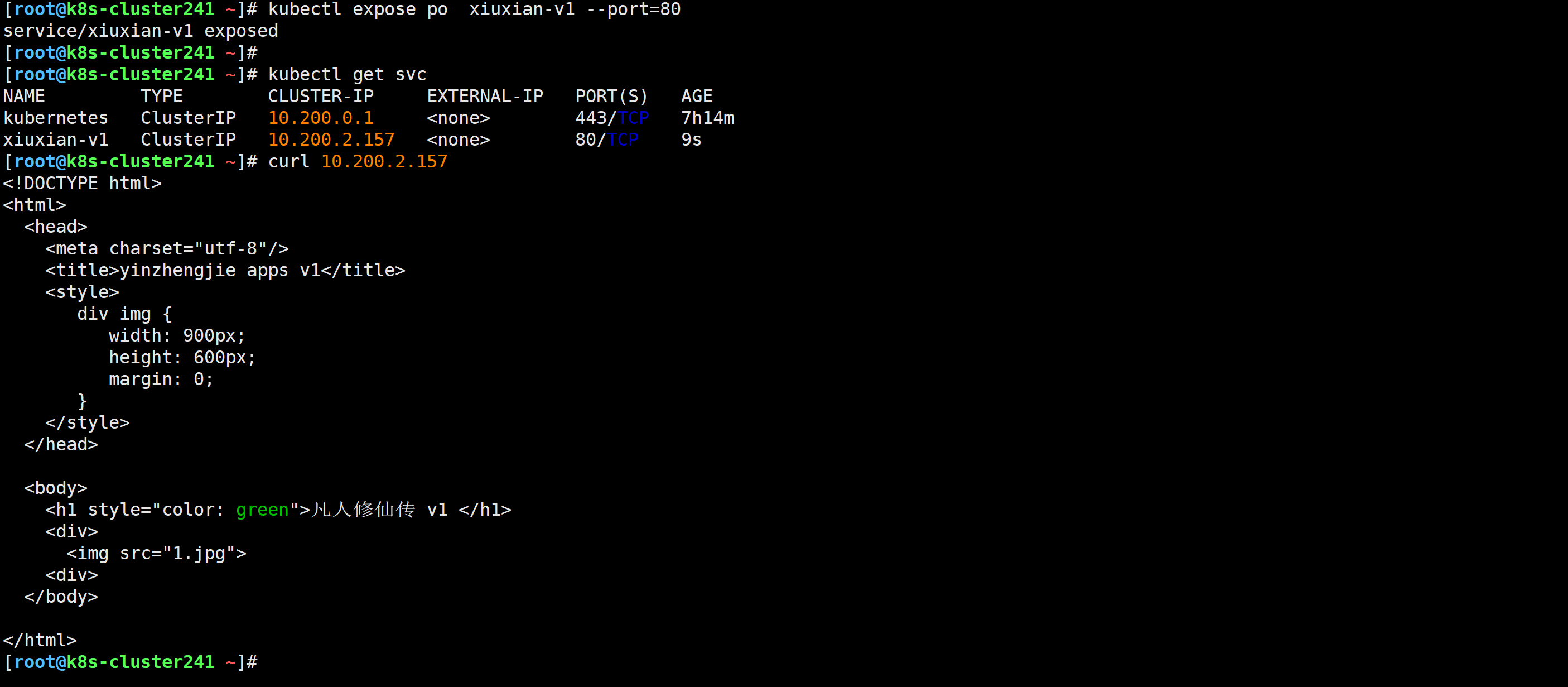

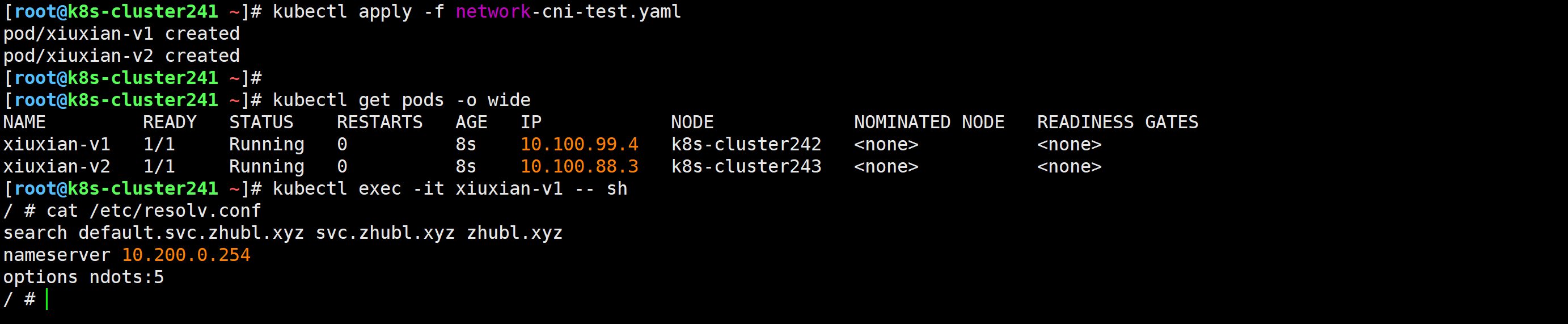

[root@k8s-cluster241 ~]# cat network-cni-test.yaml

apiVersion: v1

kind: Pod

metadata:

name: xiuxian-v1

labels:

apps: v1

spec:

nodeName: k8s-cluster242

containers:

- image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v1

name: xiuxian

---

apiVersion: v1

kind: Pod

metadata:

name: xiuxian-v2

labels:

apps: v2

spec:

nodeName: k8s-cluster243

containers:

- image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v2

name: xiuxian

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl apply -f network-cni-test.yaml

pod/xiuxian-v1 created

pod/xiuxian-v2 created

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl get pods -o wide --show-labels

[root@k8s-cluster241 ~]# curl 10.100.99.2

[root@k8s-cluster241 ~]# curl 10.100.88.2

[root@k8s-cluster241 ~]# kubectl expose po xiuxian-v1 --port=80

service/xiuxian-v1 exposed

Tip:

Calico's Whisker component can visualize traffic flows, and you can simulate traffic to test it, but it is recommended to deploy CoreDNS first.

Reference:

https://github.com/kubernetes/kubernetes/tree/master/cluster/addons/dns/coredns

wget http://192.168.16.32/Resources/Kubernetes/Add-ons/CoreDNS/coredns.yaml.base

sed -i '/__DNS__DOMAIN__/s#__DNS__DOMAIN__#zhubl.xyz#' coredns.yaml.base

sed -i '/__DNS__MEMORY__LIMIT__/s#__DNS__MEMORY__LIMIT__#200Mi#' coredns.yaml.base

sed -i '/__DNS__SERVER__/s#__DNS__SERVER__#10.200.0.254#' coredns.yaml.base

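Field descriptions:

- __DNS__DOMAIN__: the cluster DNS domain; it must match the actual K8S domain.

- __DNS__MEMORY__LIMIT__: the memory limit for the CoreDNS component.

- __DNS__SERVER__: the ClusterIP address of the DNS Service (svc).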
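The three sed substitutions can also be applied in one pass, with a check that no placeholder was left behind. A minimal sketch; the function names `render_coredns` and `no_placeholders_left` are hypothetical.

```shell
# Hypothetical helper: read the CoreDNS template on stdin and fill in the three
# placeholders (cluster domain, memory limit, DNS Service ClusterIP).
render_coredns() {
  local domain="$1" mem="$2" dns_ip="$3"
  sed -e "s#__DNS__DOMAIN__#${domain}#g" \
      -e "s#__DNS__MEMORY__LIMIT__#${mem}#g" \
      -e "s#__DNS__SERVER__#${dns_ip}#g"
}

# Return 0 only if stdin contains no remaining __DNS__...__ placeholder.
no_placeholders_left() {
  ! grep -q '__DNS__[A-Z_]*__'
}
```

For example, `render_coredns zhubl.xyz 200Mi 10.200.0.254 < coredns.yaml.base > coredns.yaml`, then `no_placeholders_left < coredns.yaml` before applying the manifest.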

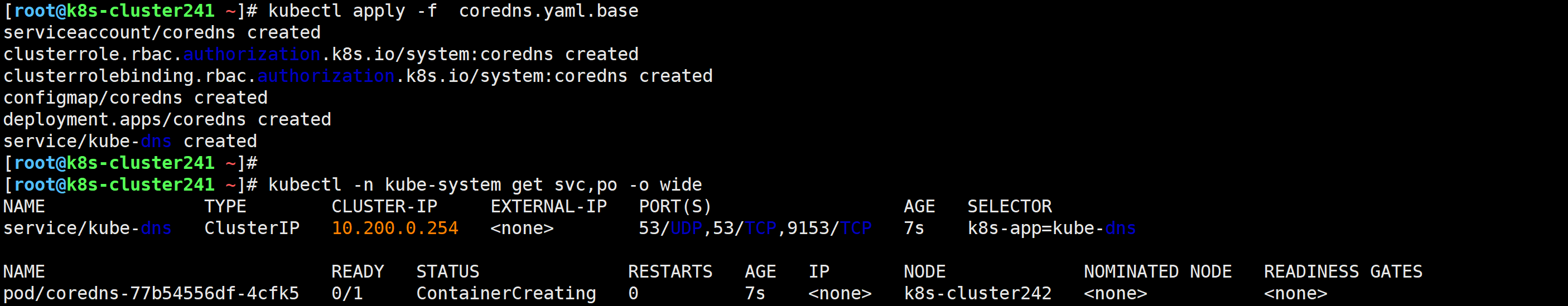

[root@k8s-cluster241 ~]# kubectl apply -f coredns.yaml.base

serviceaccount/coredns created

clusterrole.rbac.authorization.k8s.io/system:coredns created

clusterrolebinding.rbac.authorization.k8s.io/system:coredns created

configmap/coredns created

deployment.apps/coredns created

service/kube-dns created

Tip:

If the image download fails, import the image manually as follows:

wget http://192.168.16.32/Resources/Kubernetes/Add-ons/CoreDNS/coredns-v1.12.3.tar.gz

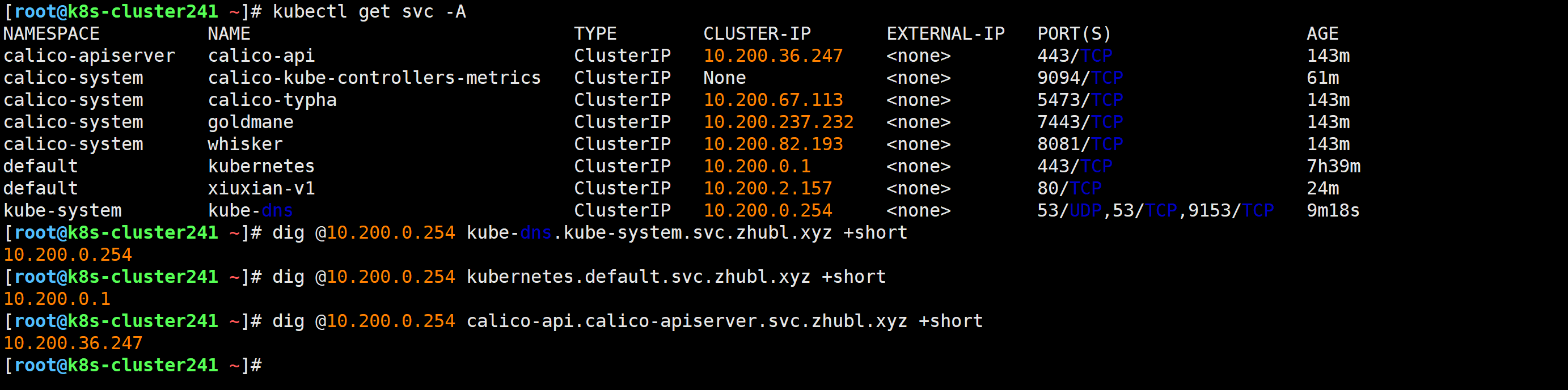

[root@k8s-cluster241 ~]# dig @10.200.0.254 kube-dns.kube-system.svc.zhubl.xyz +short

10.200.0.254

[root@k8s-cluster241 ~]# dig @10.200.0.254 kubernetes.default.svc.zhubl.xyz +short

10.200.0.1

[root@k8s-cluster241 ~]# dig @10.200.0.254 calico-api.calico-apiserver.svc.zhubl.xyz +short

10.200.36.247

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl exec -it xiuxian-v1 -- sh

/ # cat /etc/resolv.conf

search default.svc.zhubl.xyz svc.zhubl.xyz zhubl.xyz

nameserver 10.200.0.254

options ndots:5

/ #
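The "search" list and "options ndots:5" in this resolv.conf decide which fully qualified names a short name is expanded to. The sketch below models the usual glibc-style rule in simplified form (names with fewer dots than ndots try the search domains first); it is an illustration with a hypothetical function name, not the resolver's actual code, and other resolver implementations may behave differently.

```shell
# Simplified model: print the candidate FQDNs, in lookup order, for a name
# given ndots and the search domains. Names with at least `ndots` dots are
# tried as-is first; all other names are tried as-is last.
candidate_names() {
  local name="$1" ndots="$2"
  shift 2
  local dots_only dots d
  dots_only="$(printf '%s' "$name" | tr -cd '.')"
  dots="${#dots_only}"
  if [ "$dots" -ge "$ndots" ]; then printf '%s.\n' "$name"; fi
  for d in "$@"; do printf '%s.%s.\n' "$name" "$d"; done
  if [ "$dots" -lt "$ndots" ]; then printf '%s.\n' "$name"; fi
}
```

Calling `candidate_names kube-dns.kube-system 5 default.svc.zhubl.xyz svc.zhubl.xyz zhubl.xyz` lists the three search-domain expansions first and the bare name last; the second candidate, kube-dns.kube-system.svc.zhubl.xyz, is the one that resolves in this cluster.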

[root@k8s-cluster241 ~]# kubectl delete -f network-cni-test.yaml

pod "xiuxian-v1" deleted

pod "xiuxian-v2" deleted

[root@k8s-cluster241 ~]# kubectl get pods -o wide

No resources found in default namespace.

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# grep mode /etc/kubernetes/kube-proxy.yml

mode: "ipvs"

[root@k8s-cluster242 ~]# grep mode /etc/kubernetes/kube-proxy.yml

mode: "ipvs"

[root@k8s-cluster243 ~]# grep mode /etc/kubernetes/kube-proxy.yml

mode: "ipvs"

[root@k8s-cluster243 ~]#
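
With kube-proxy in IPVS mode, MetalLB's documentation also asks for strict ARP to be enabled (`ipvs.strictARP: true`) before Layer 2 mode is used. Whether the config file in this guide already sets it is not shown here, so the sketch below only checks for it. `check_strict_arp` is a hypothetical helper and the default path is the one used in the commands above.

```shell
# check_strict_arp [file]: succeed only if the kube-proxy config sets strictARP: true.
check_strict_arp() {
  local file=${1:-/etc/kubernetes/kube-proxy.yml}
  grep -Eq '^[[:space:]]*strictARP:[[:space:]]*true[[:space:]]*$' "$file"
}

# Example (run on every node):
# if ! check_strict_arp; then
#   echo "set ipvs.strictARP to true and restart kube-proxy" >&2
# fi
```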

wget http://192.168.16.32/Resources/Kubernetes/Add-ons/metallb/v0.15.2/metallb-controller-v0.15.2.tar.gz

wget http://192.168.16.32/Resources/Kubernetes/Add-ons/metallb/v0.15.2/metallb-speaker-v0.15.2.tar.gz

ctr -n k8s.io i import metallb-controller-v0.15.2.tar.gz

ctr -n k8s.io i import metallb-speaker-v0.15.2.tar.gz

wget https://raw.githubusercontent.com/metallb/metallb/v0.15.2/config/manifests/metallb-native.yaml

or

wget http://192.168.16.32/Resources/Kubernetes/Add-ons/metallb/v0.15.2/metallb-native.yaml

[root@k8s-cluster241 ~]# kubectl apply -f metallb-native.yaml

namespace/metallb-system created

customresourcedefinition.apiextensions.k8s.io/bfdprofiles.metallb.io created

customresourcedefinition.apiextensions.k8s.io/bgpadvertisements.metallb.io created

customresourcedefinition.apiextensions.k8s.io/bgppeers.metallb.io created

customresourcedefinition.apiextensions.k8s.io/communities.metallb.io created

customresourcedefinition.apiextensions.k8s.io/ipaddresspools.metallb.io created

customresourcedefinition.apiextensions.k8s.io/l2advertisements.metallb.io created

customresourcedefinition.apiextensions.k8s.io/servicebgpstatuses.metallb.io created

customresourcedefinition.apiextensions.k8s.io/servicel2statuses.metallb.io created

serviceaccount/controller created

serviceaccount/speaker created

role.rbac.authorization.k8s.io/controller created

role.rbac.authorization.k8s.io/pod-lister created

clusterrole.rbac.authorization.k8s.io/metallb-system:controller created

clusterrole.rbac.authorization.k8s.io/metallb-system:speaker created

rolebinding.rbac.authorization.k8s.io/controller created

rolebinding.rbac.authorization.k8s.io/pod-lister created

clusterrolebinding.rbac.authorization.k8s.io/metallb-system:controller created

clusterrolebinding.rbac.authorization.k8s.io/metallb-system:speaker created

configmap/metallb-excludel2 created

secret/metallb-webhook-cert created

service/metallb-webhook-service created

deployment.apps/controller created

daemonset.apps/speaker created

validatingwebhookconfiguration.admissionregistration.k8s.io/metallb-webhook-configuration created

[root@k8s-cluster241 ~]#
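
The manifest installs a validating webhook, so applying MetalLB custom resources before the controller is up may be rejected. A small wait step avoids that. This is a sketch: the workload names `controller` and `speaker` come from the apply output above, while the function name and the default timeout are assumptions.

```shell
# wait_for_metallb [timeout]: block until the MetalLB controller and speakers are rolled out.
wait_for_metallb() {
  local timeout=${1:-120s}
  kubectl -n metallb-system rollout status deployment/controller --timeout="$timeout" &&
  kubectl -n metallb-system rollout status daemonset/speaker --timeout="$timeout"
}

# Example:
# wait_for_metallb 180s && kubectl apply -f metallb-ip-pool.yaml
```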

[root@k8s-cluster241 ~]# cat metallb-ip-pool.yaml

apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: zhubaolin
  namespace: metallb-system
spec:
  addresses:
  # Change this to the IP range you have allocated to MetalLB
  - 10.0.0.150-10.0.0.180
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: zhu
  namespace: metallb-system
spec:
  ipAddressPools:
  - zhubaolin

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl apply -f metallb-ip-pool.yaml

ipaddresspool.metallb.io/zhubaolin created

l2advertisement.metallb.io/zhu created

[root@k8s-cluster241 ~]#

[root@k8s-cluster241 ~]# kubectl get ipaddresspools.metallb.io -A

NAMESPACE        NAME        AUTO ASSIGN   AVOID BUGGY IPS   ADDRESSES

metallb-system   zhubaolin   true          false             ["10.0.0.150-10.0.0.180"]

[root@k8s-cluster241 ~]#
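
Before settling on a range, it helps to know how many addresses the pool holds and to confirm that it does not contain any node or load balancer address. The helpers below are a minimal sketch in plain bash arithmetic. Their names are illustrative, and they assume dotted IPv4 addresses without leading zeros.

```shell
# ip_to_int <a.b.c.d>: print the address as a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# pool_size <start-end>: number of addresses in an inclusive range.
pool_size() {
  local start=${1%-*} end=${1#*-}
  echo $(( $(ip_to_int "$end") - $(ip_to_int "$start") + 1 ))
}

# in_pool <ip> <start-end>: succeed if the address lies inside the range.
in_pool() {
  local ip start end
  ip=$(ip_to_int "$1")
  start=$(ip_to_int "${2%-*}")
  end=$(ip_to_int "${2#*-}")
  [ "$ip" -ge "$start" ] && [ "$ip" -le "$end" ]
}

# Example: make sure no cluster node address falls inside the pool.
# for node_ip in 10.0.0.240 10.0.0.241 10.0.0.242 10.0.0.243; do
#   in_pool "$node_ip" 10.0.0.150-10.0.0.180 && echo "conflict: $node_ip"
# done
```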

[root@k8s-cluster241 ~]# cat deploy-svc-LoadBalancer.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: deploy-xiuxian
spec:
  replicas: 3
  selector:
    matchLabels:
      apps: v1
  template:
    metadata:
      labels:
        apps: v1
    spec:
      containers:
      - name: c1
        image: registry.cn-hangzhou.aliyuncs.com/yinzhengjie-k8s/apps:v3
---
apiVersion: v1
kind: Service
metadata:
  name: svc-xiuxian
spec:
  type: LoadBalancer
  selector:
    apps: v1
  ports:
  - port: 80

[root@k8s-cluster241 ~]#

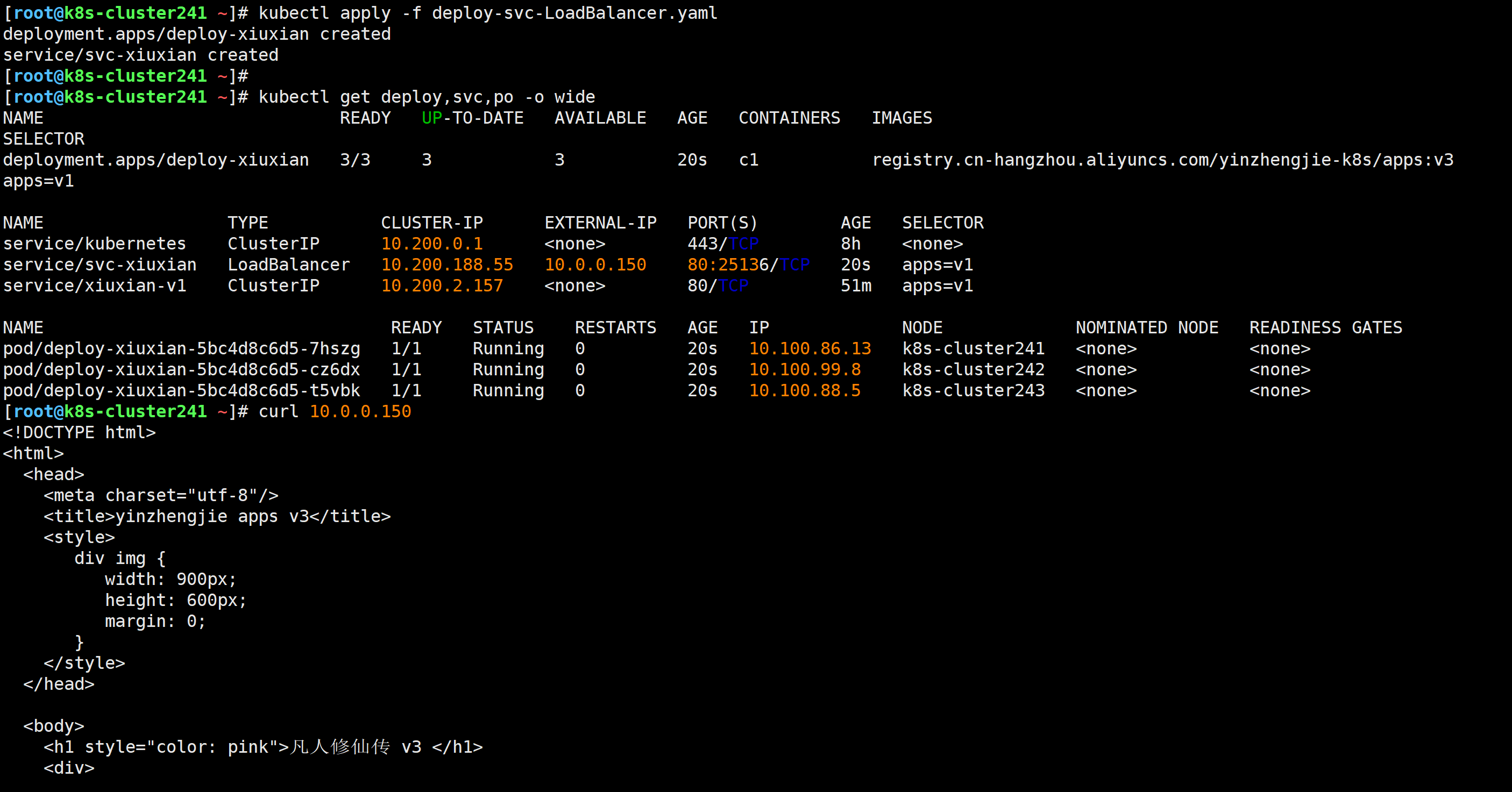

[root@k8s-cluster241 ~]# kubectl apply -f deploy-svc-LoadBalancer.yaml

deployment.apps/deploy-xiuxian created

service/svc-xiuxian created

[root@k8s-cluster241 ~]#

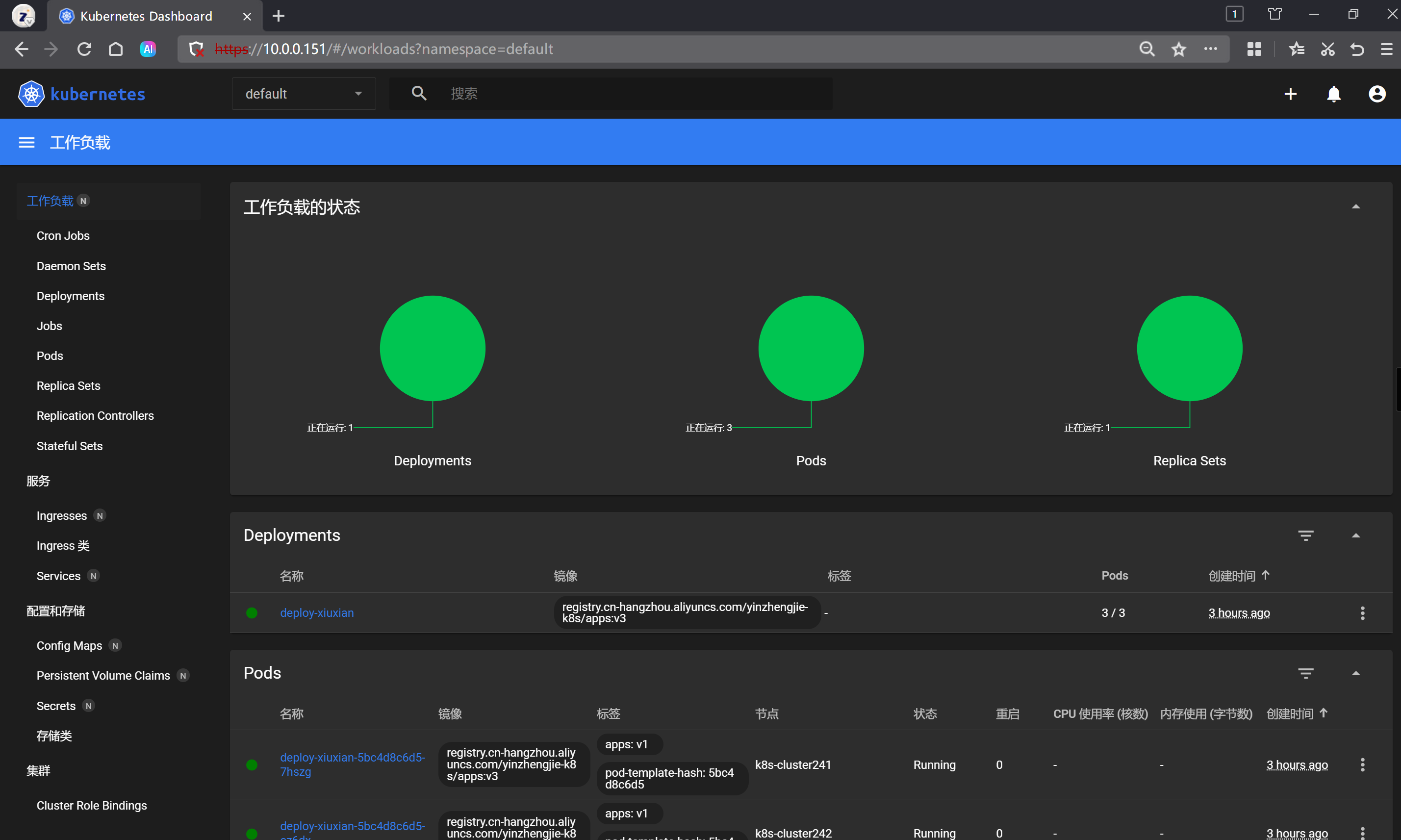

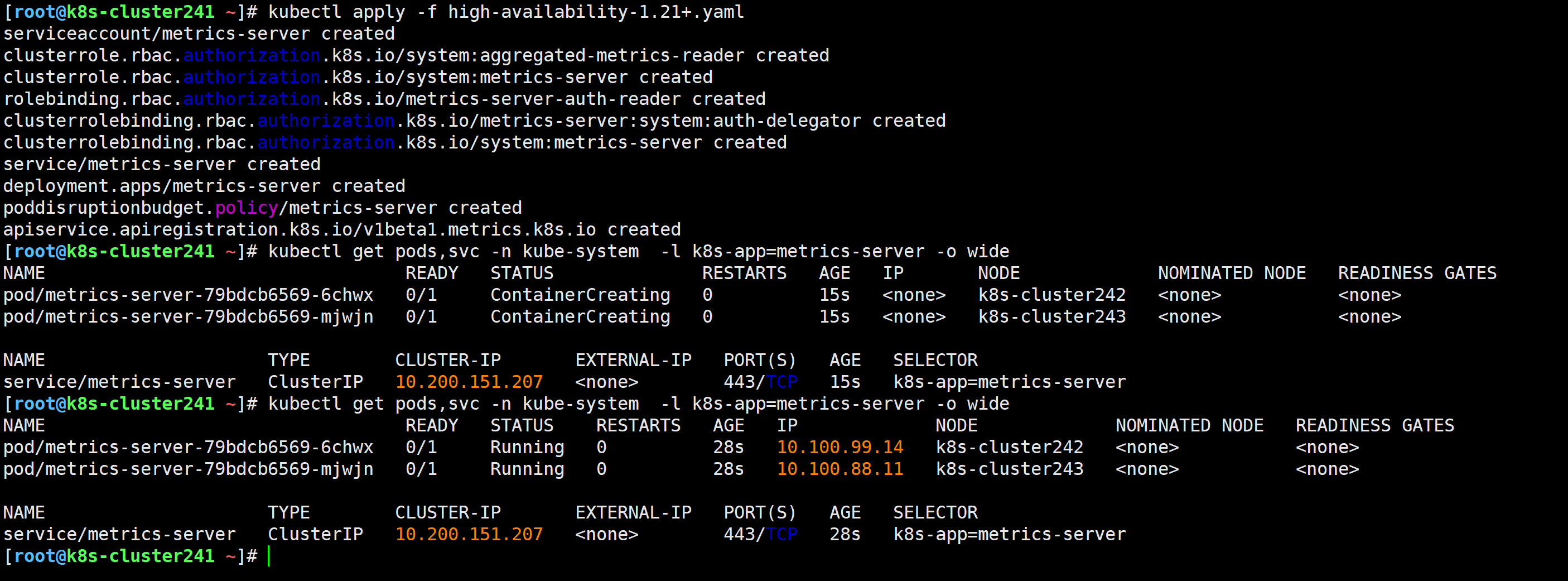

[root@k8s-cluster241 ~]# kubectl get deploy,svc,po -o wide

[root@k8s-cluster241 ~]# curl 10.0.0.150
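
Instead of hard-coding 10.0.0.150, the address MetalLB assigned can be read from the Service status and then probed. This is a sketch: `lb_ip` and `check_lb` are hypothetical helper names, and the 5-second curl timeout is an arbitrary choice.

```shell
# lb_ip <service> [namespace]: print the external IP assigned to a LoadBalancer Service.
lb_ip() {
  kubectl -n "${2:-default}" get svc "$1" \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# check_lb <service> [namespace]: fail if no IP is assigned or the HTTP request fails.
check_lb() {
  local ip
  ip=$(lb_ip "$@")
  [ -n "$ip" ] || { echo "no external IP assigned yet" >&2; return 1; }
  curl -fsS --max-time 5 "http://$ip/" >/dev/null && echo "reachable: $ip"
}

# Example:
# check_lb svc-xiuxian
```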

wget https://get.helm.sh/helm-v3.18.4-linux-amd64.tar.gz

or

wget http://192.168.16.32/Resources/Kubernetes/Add-ons/helm/softwares/helm-v3.18.4-linux-amd64.tar.gz
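
Whichever source the tarball comes from, it is worth checking its SHA-256 before unpacking it into /usr/local/bin. The sketch below assumes you have obtained the expected digest separately, for example from the Helm release page. `verify_sha256` is a hypothetical helper name.

```shell
# verify_sha256 <file> <expected-hex-digest>: compare a file's SHA-256 with a known value.
verify_sha256() {
  local file=$1 want=$2 got
  got=$(sha256sum "$file" | awk '{print $1}')
  if [ "$got" = "$want" ]; then
    echo "checksum OK: $file"
  else
    echo "checksum MISMATCH: $file" >&2
    return 1
  fi
}

# Example (EXPECTED_SHA256 is a placeholder you must fill in yourself):
# verify_sha256 helm-v3.18.4-linux-amd64.tar.gz "$EXPECTED_SHA256"
```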

[root@k8s-cluster241 ~]# tar xf helm-v3.18.4-linux-amd64.tar.gz -C /usr/local/bin/ linux-amd64/helm --strip-components=1

[root@k8s-cluster241 ~]# ll /usr/local/bin/helm

-rwxr-xr-x 1 1001 fwupd-refresh 59715768 Jul 9 04:36 /usr/local/bin/helm*

[root@k8s-cluster241 ~]# helm version

version.BuildInfo{Version:"v3.18.4", GitCommit:"d80839cf37d860c8aa9a0503fe463278f26cd5e2", GitTreeState:"clean", GoVersion:"go1.24.4"}

[root@k8s-cluster241 ~]#

helm completion bash > /etc/bash_completion.d/helm

source /etc/bash_completion.d/helm

echo 'source /etc/bash_completion.d/helm' >> ~/.bashrc

Reference:

[root@k8s-cluster241 ~]# helm repo add kubernetes-dashboard https://kubernetes.github.io/dashboard/

"kubernetes-dashboard" has been added to your repositories

[root@k8s-cluster241 ~]# helm repo list

NAME                   URL

kubernetes-dashboard   https://kubernetes.github.io/dashboard/

Image download location: http://192.168.16.32/Resources/Kubernetes/Add-ons/dashboard/helm/v7.13.0/images/

export https_proxy=http://10.0.0.1:7890

export http_proxy=http://10.0.0.1:7890

ctr -n k8s.io i pull docker.io/kubernetesui/dashboard-api:1.13.0

ctr -n k8s.io i pull docker.io/kubernetesui/dashboard-auth:1.3.0

ctr -n k8s.io i pull docker.io/library/kong:3.8

ctr -n k8s.io i pull docker.io/kubernetesui/dashboard-metrics-scraper:1.2.2

ctr -n k8s.io i pull docker.io/kubernetesui/dashboard-web:1.7.0
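
The pull commands above and the export commands that follow pair each image with a tarball name built from the image reference. A loop can derive that name instead of typing it by hand. This is a sketch: the naming rule is inferred from the `kubernetesui` examples in this guide, so the name it yields for `docker.io/library/kong:3.8` is a guess at the pattern. `image_to_tarball`, `pull_and_export` and the `CTR` override are illustrative names.

```shell
CTR=${CTR:-ctr}

# image_to_tarball <image-ref>: derive an archive name from an image reference.
image_to_tarball() {
  local ref=${1#docker.io/}   # drop the registry prefix
  ref=${ref//\//-}            # kubernetesui/dashboard-api -> kubernetesui-dashboard-api
  ref=${ref//:/-}             # :1.13.0 -> -1.13.0
  echo "$ref.tar.gz"
}

# pull_and_export <image-ref>...: pull each image, then export it to its archive.
pull_and_export() {
  local image
  for image in "$@"; do
    "$CTR" -n k8s.io images pull "$image" &&
    "$CTR" -n k8s.io images export "$(image_to_tarball "$image")" "$image" || return 1
  done
}

# Example:
# pull_and_export docker.io/kubernetesui/dashboard-api:1.13.0 \
#                 docker.io/kubernetesui/dashboard-auth:1.3.0
```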

ctr -n k8s.io i export kubernetesui-dashboard-api-1.13.0.tar.gz docker.io/kubernetesui/dashboard-api:1.13.0

ctr -n k8s.io i export kubernetesui-dashboard-auth-1.3.0.tar.gz docker.io/kubernetesui/dashboard-auth:1.3.0